Using KMeans from sklearn on seeds


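Contents

- Preface
- 1. Main steps of k-means
- 2. The dataset
- 3. Without PCA dimensionality reduction
  - 3.1 Reading the data
  - 3.2 Finding the number of clusters
  - 3.3 Training and evaluation
  - 3.4 Complete code
- 4. With PCA dimensionality reduction
- 5. Comparing the results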

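Preface

This article shows how to cluster data with the KMeans class from the sklearn library. It does not go into the theory behind the k-means algorithm in any depth.

1. Main steps of k-means

Step 1: Choose the number of clusters k (for example, k = 3) and pick k initial center points.

Step 2: For each sample, find the center closest to it. All samples that share the same closest center form one cluster, which completes one clustering pass.

Step 3: Check whether the cluster assignments are the same as before the pass. If they are, the algorithm terminates; otherwise continue with step 4.

Step 4: For each cluster, compute the center (the mean) of its samples, use it as the new center of that cluster, and go back to step 2.

These steps are the basic k-means algorithm. The KMeans class in sklearn implements them already, which makes things very convenient.

2. The dataset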

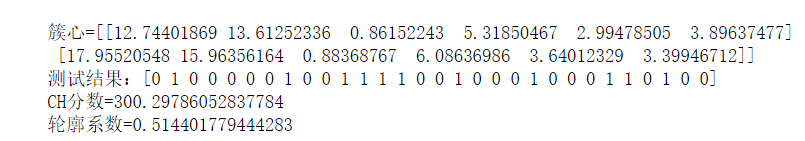

A detailed description of the dataset is available here -> download link. The data and code can also be downloaded from my GitHub: github

3. Without PCA dimensionality reduction

3.1 Reading the data

The code is as follows:

    from pandas import read_csv
    import numpy as np
    from sklearn import metrics
    from sklearn.cluster import KMeans
    import matplotlib.pyplot as plt

    # Raw string, so the backslashes in the Windows path are not read as escapes
    filename = r'D:\datasets\mldata\seeds_dataset.csv'  # path to the data file
    names = ['area', 'perimeter', 'compactness', 'klength', 'width', 'asymmetry', 'kglength', 'kind']
    data = read_csv(filename, names=names)  # read the data
    seed = data.to_numpy()                  # convert to a NumPy array
    # print(seed.shape)
    np.random.shuffle(seed)                 # shuffle the rows in place
    # Split the data set. 0:6 selects the first six feature columns,
    # so 'kglength' and the 'kind' label are left out.
    X_train = seed[:180, 0:6]
    X_test = seed[180:, 0:6]

3.2 Finding the number of clusters

The loop below fits KMeans for k = 1 to 39 and plots the inertia (distortion) of each fit. The bend in the curve suggests a suitable number of clusters.

    distortions = []
    for i in range(1, 40):
        km = KMeans(n_clusters=i, init='k-means++', n_init=10, max_iter=300, random_state=0)
        km.fit(X_train)
        distortions.append(km.inertia_)  # within-cluster sum of squared distances
    plt.plot(range(1, 40), distortions, marker='o')
    plt.xlabel('Number of clusters')
    plt.ylabel('Distortion')
    plt.show()

For more on this step, see this blog post: link

3.3 Training and evaluation

The code is as follows:

    # Fit 2 centroids. The seeds data contains 3 wheat varieties,
    # so n_clusters=3 is also worth trying.
    kmeans = KMeans(n_clusters=2, random_state=10)
    kmeans.fit(X_train)
    # print(kmeans.score(X_test))
    # print(kmeans.labels_)  # cluster labels of the training samples
    print("cluster centers={}".format(kmeans.cluster_centers_))
    print("test predictions: {}".format(kmeans.predict(X_test)))  # assign test samples to clusters
    print("CH score={}".format(metrics.calinski_harabasz_score(X_train, kmeans.labels_)))
    print("silhouette coefficient={}".format(metrics.silhouette_score(X_train, kmeans.labels_, metric='euclidean')))

3.4 Complete code

    from pandas import read_csv
    import numpy as np
    from sklearn import metrics
    from sklearn.cluster import KMeans
    import matplotlib.pyplot as plt

    filename = r'D:\datasets\mldata\seeds_dataset.csv'  # path to the data file
    names = ['area', 'perimeter', 'compactness', 'klength', 'width', 'asymmetry', 'kglength', 'kind']
    data = read_csv(filename, names=names)  # read the data
    seed = data.to_numpy()                  # convert to a NumPy array
    # print(seed.shape)
    np.random.shuffle(seed)                 # shuffle the rows in place
    X_train = seed[:180, 0:6]               # split the data set (first six features)
    X_test = seed[180:, 0:6]
    # print(X_train.shape)
    # print(X_test.shape)

    # Inertia for k = 1 to 39, plotted to pick the number of clusters
    distortions = []
    for i in range(1, 40):
        km = KMeans(n_clusters=i, init='k-means++', n_init=10, max_iter=300, random_state=0)
        km.fit(X_train)
        distortions.append(km.inertia_)
    plt.plot(range(1, 40), distortions, marker='o')
    plt.xlabel('Number of clusters')
    plt.ylabel('Distortion')
    plt.show()

    # Fit 2 centroids (n_clusters=3 is also worth trying, see 3.3)
    kmeans = KMeans(n_clusters=2, random_state=10)
    kmeans.fit(X_train)
    # print(kmeans.score(X_test))
    # print(kmeans.labels_)  # cluster labels of the training samples
    print("cluster centers={}".format(kmeans.cluster_centers_))
    print("test predictions: {}".format(kmeans.predict(X_test)))
    print("CH score={}".format(metrics.calinski_harabasz_score(X_train, kmeans.labels_)))
    print("silhouette coefficient={}".format(metrics.silhouette_score(X_train, kmeans.labels_, metric='euclidean')))

4. With PCA dimensionality reduction

The code is as follows:

    from pandas import read_csv
    import numpy as np
    from sklearn import metrics
    import matplotlib.pyplot as plt
    from sklearn.cluster import KMeans
    from sklearn.decomposition import PCA

    filename = r'D:\datasets\mldata\seeds_dataset.csv'  # path to the data file
    names = ['area', 'perimeter', 'compactness', 'klength', 'width', 'asymmetry', 'kglength', 'kind']
    data = read_csv(filename, names=names)  # read the data
    seed = data.to_numpy()                  # convert to a NumPy array
    # print(seed.shape)
    np.random.shuffle(seed)                 # shuffle the rows in place
    X_train = seed[:180, 0:6]               # training data (first six features)
    X_test = seed[180:, 0:6]
    # print(X_train.shape)
    # print(X_test.shape)

    # Keep as many principal components as needed to explain 95% of the variance
    pca = PCA(0.95)
    pca.fit(X_train)
    X_train_reduction = pca.transform(X_train)
    X_test_reduction = pca.transform(X_test)
    # print(X_train_reduction.shape)

    kmeans = KMeans(n_clusters=2, random_state=10)  # fit 2 centroids on the reduced data
    kmeans.fit(X_train_reduction)
    # print(seed[:, 7])  # true labels (the 'kind' column)
    # print(kmeans.score(X_test))
    print("cluster centers={}".format(kmeans.cluster_centers_))
    print("test predictions: {}".format(kmeans.predict(X_test_reduction)))
    print("CH score={}".format(metrics.calinski_harabasz_score(X_train_reduction, kmeans.labels_)))
    print("silhouette coefficient={}".format(metrics.silhouette_score(X_train_reduction, kmeans.labels_, metric='euclidean')))
    # The scatter plot uses the first two components, so PCA must have kept at least two
    plt.scatter(X_train_reduction[:, 0], X_train_reduction[:, 1], c=kmeans.labels_)
    plt.show()

5. Comparing the results

Without PCA:


With PCA:


Conclusion: reducing the dimensionality with PCA has little effect on accuracy.
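The conclusion speaks of accuracy, while the scripts in sections 3 and 4 print only the CH score and the silhouette coefficient, neither of which uses the true labels. One way to put the two runs side by side is sketched below. Everything in it is an assumption made for illustration: the `evaluate` helper is hypothetical, synthetic `make_blobs` data stands in for the seeds file so the snippet runs anywhere, `n_clusters=3` follows the three classes in the dataset rather than the value used earlier, and `adjusted_rand_score` is a substitute measure of agreement with known labels, not something the original code computes.

```python
from sklearn import metrics
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs
from sklearn.decomposition import PCA


def evaluate(X, y_true, n_clusters=3, seed=0):
    """Cluster X with KMeans and return three scores in a dict (hypothetical helper)."""
    km = KMeans(n_clusters=n_clusters, n_init=10, random_state=seed).fit(X)
    return {
        "silhouette": metrics.silhouette_score(X, km.labels_),
        "ch": metrics.calinski_harabasz_score(X, km.labels_),
        # ARI compares the cluster labels with the known classes and does not
        # depend on how the clusters happen to be numbered
        "ari": metrics.adjusted_rand_score(y_true, km.labels_),
    }


# Assumption: synthetic stand-in data with 210 samples, 7 features and 3 classes
X, y = make_blobs(n_samples=210, n_features=7, centers=3, cluster_std=1.0, random_state=0)

# Keep 95% of the variance, as in section 4
X_reduced = PCA(0.95).fit_transform(X)

scores_raw = evaluate(X, y)
scores_pca = evaluate(X_reduced, y)
for name in scores_raw:
    print("{}: without PCA={:.3f}, with PCA={:.3f}".format(name, scores_raw[name], scores_pca[name]))
```

To try this on the real data, `X` would be the feature columns of `seed` and `y` the `kind` column. Scores that stay close between the two rows would support the conclusion above; a clear drop after PCA would call for a closer look.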