Building Hive 3.1.3 from Source



Build notes

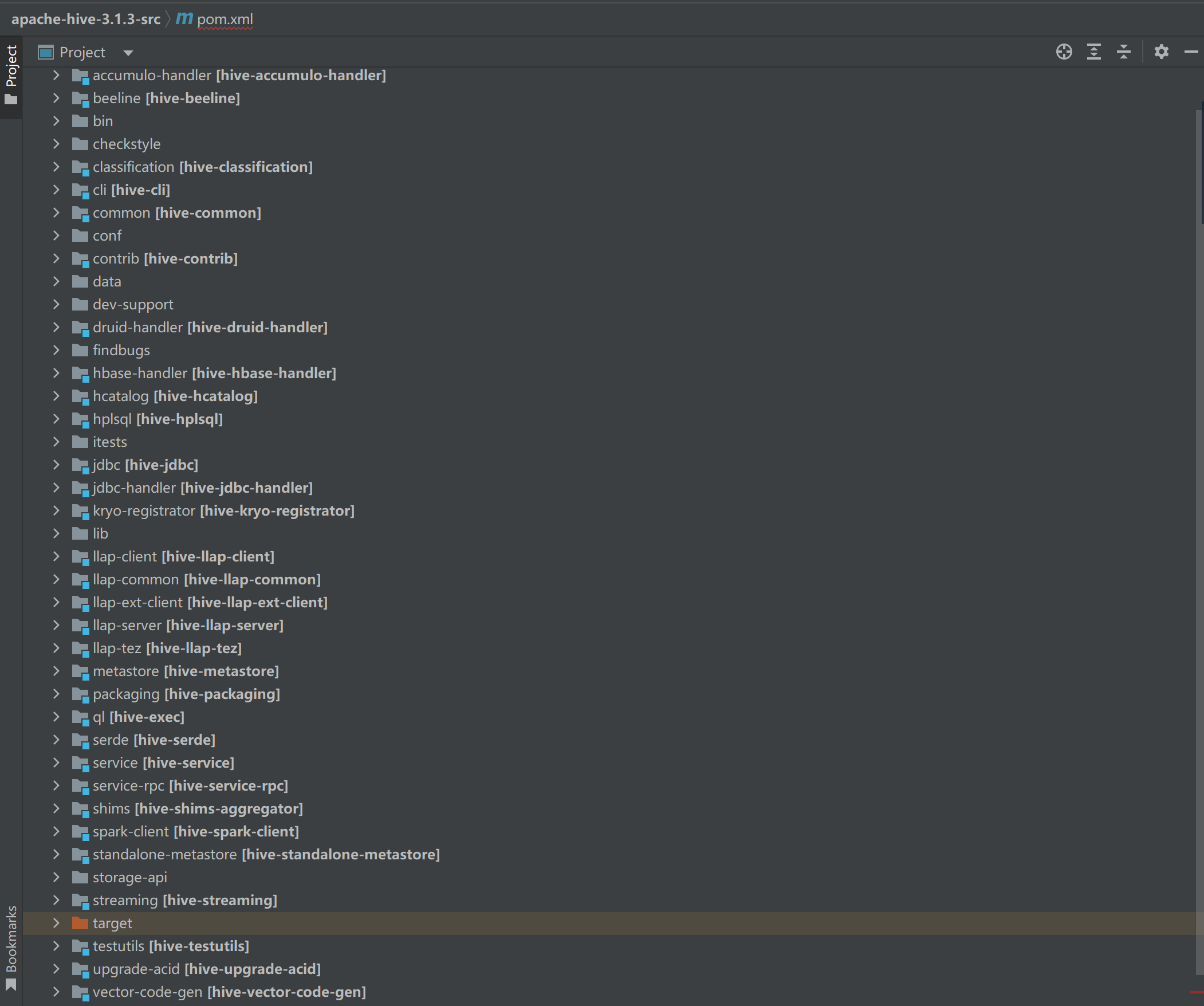

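Using the precompiled packages published by the Hive project is the most common and recommended way to run Hive, and it suits most users. Those packages have been tested and verified and run correctly in many different environments.

In some specific situations, however, you may need to build Hive from source instead of using a precompiled package. Typical scenarios and reasons:

1. Custom configuration: if you want to customize or modify Hive, for example by changing default parameter settings, adding a new storage backend, or integrating a new execution engine, building from source lets you make those changes.
2. Feature extension: if you need to extend Hive, for example with custom UDFs (user-defined functions), UDAFs (user-defined aggregate functions), or UDTFs (user-defined table-generating functions), building from source lets you add and build them.
3. Debugging and fixing bugs: if you run into a problem or find a bug while using Hive and want to debug and fix it, building from source gives you the source code that matches the running binaries.
4. Latest features and improvements: if you want features, improvements, or optimizations that have not yet shipped in an official precompiled package, you can build the latest version from source.
5. Contributing to the community: if you want to contribute to Hive, building from source gives you the complete development environment, including the build tooling, test framework, and source code.

Building Hive 3.1.3

When Spark is used as Hive's execution engine, Hive 3.1.3 itself only supports Spark 2.3, so Hive has to be rebuilt to support a newer Spark version. The plan here is to build Hive against Spark 3.4.0 and Hadoop 3.1.3.

Changing the Maven configuration

Edit Maven's settings.xml and decide, depending on your situation, whether to add the following mirrors. They are for reference only:

    <mirror>
      <id>aliyun-central</id>
      <name>阿里云公共仓库</name>
      <url>https://maven.aliyun.com/repository/central</url>
      <mirrorOf>*</mirrorOf>
    </mirror>
    <mirror>
      <id>repo</id>
      <mirrorOf>central</mirrorOf>
      <name>Human Readable Name for this Mirror.</name>
      <url>https://repo.maven.apache.org/maven2</url>
    </mirror>

Downloading the source

Download the source of the Hive version you want to build; here that is Hive 3.1.3:

    wget https://archive.apache.org/dist/hive/hive-3.1.3/apache-hive-3.1.3-src.tar.gz

Open the apache-hive-3.1.3-src project in IDEA. Right after opening, the IDE will mark many things in red; ignore that for now.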
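As a rough sketch of the download step, the helpers below build the source archive URL for a given Hive version and then fetch and unpack it. The function names `hive_src_url` and `fetch_hive_src` are made up for illustration, and the URL pattern is only assumed to hold for releases other than 3.1.3.

```shell
# Hypothetical helper: print the archive.apache.org URL of a Hive source tarball.
# The pattern is copied from the 3.1.3 download URL; other versions are an assumption.
hive_src_url() {
  version=$1
  printf 'https://archive.apache.org/dist/hive/hive-%s/apache-hive-%s-src.tar.gz\n' \
    "$version" "$version"
}

# Hypothetical helper: download the tarball and unpack it into the current directory.
fetch_hive_src() {
  version=$1
  wget "$(hive_src_url "$version")" \
    && tar -xzf "apache-hive-${version}-src.tar.gz"
}

# Usage (needs network access):
#   fetch_hive_src 3.1.3
```

Keeping the URL construction in its own function makes it easy to print and check the address before anything is downloaded.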

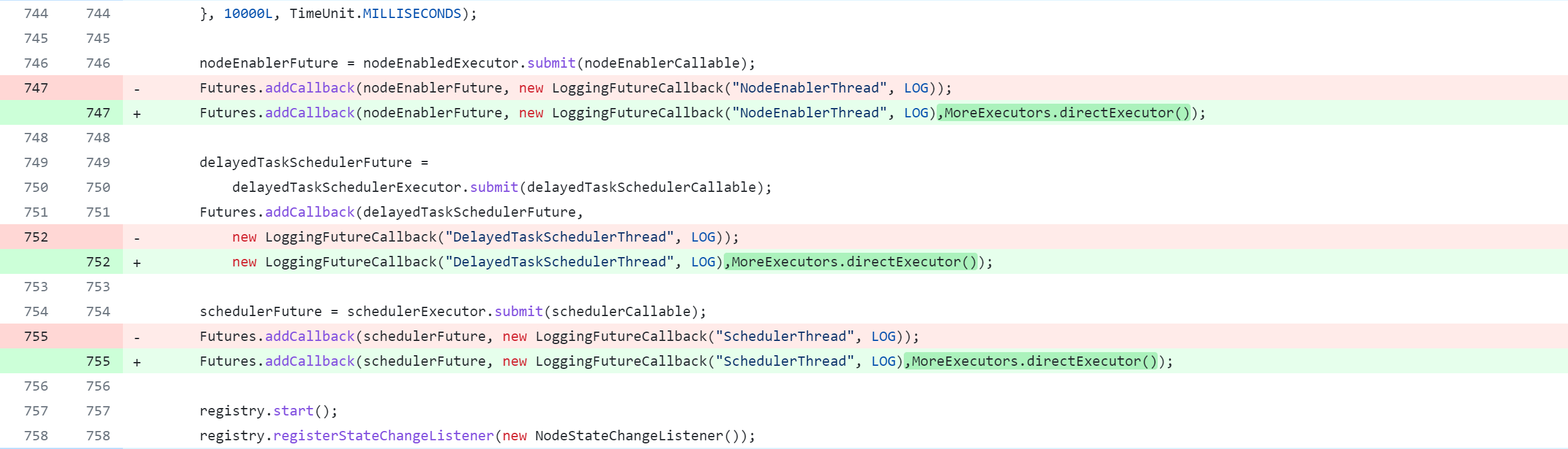

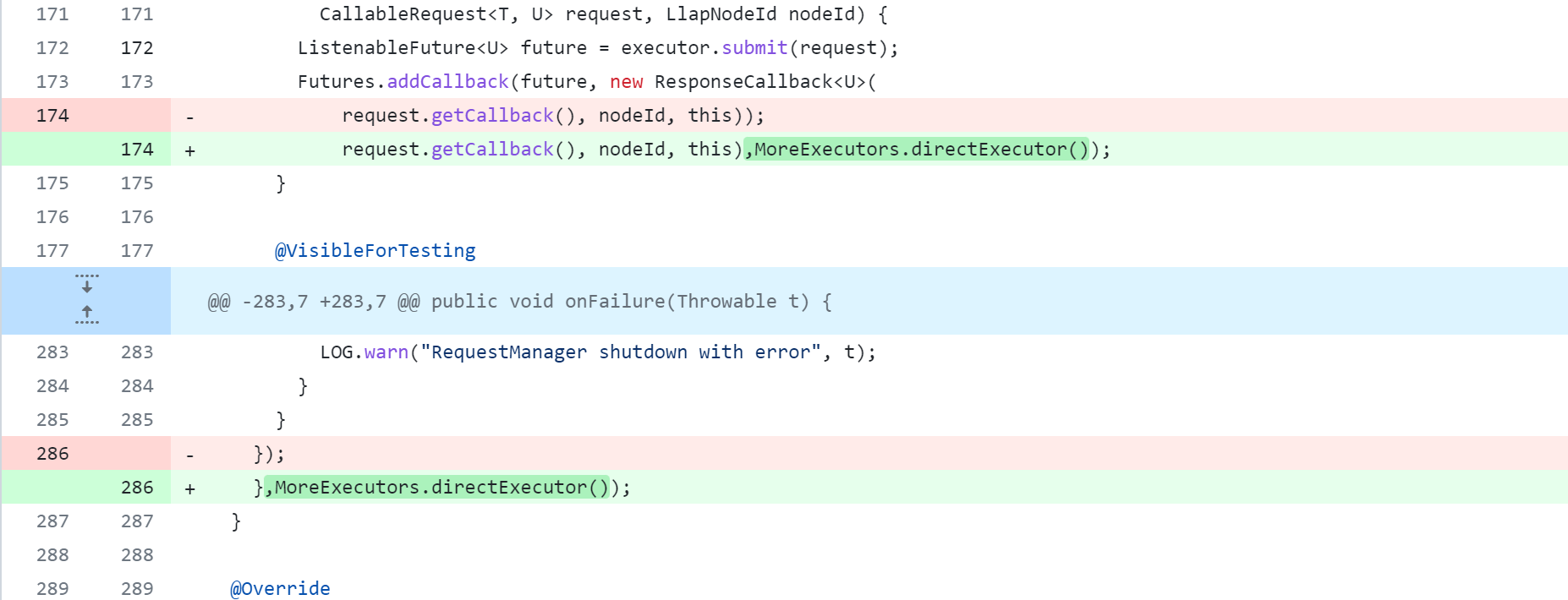

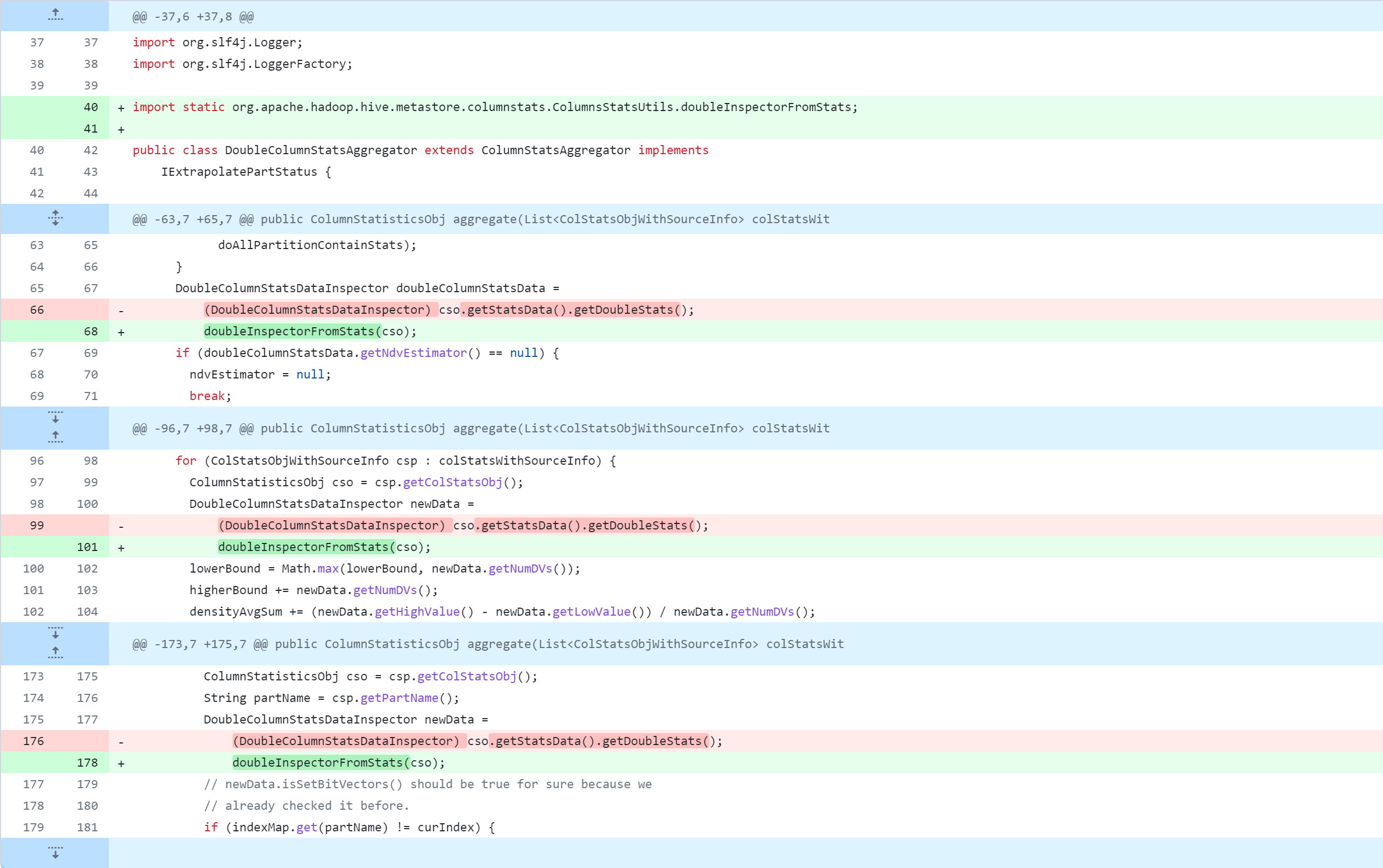

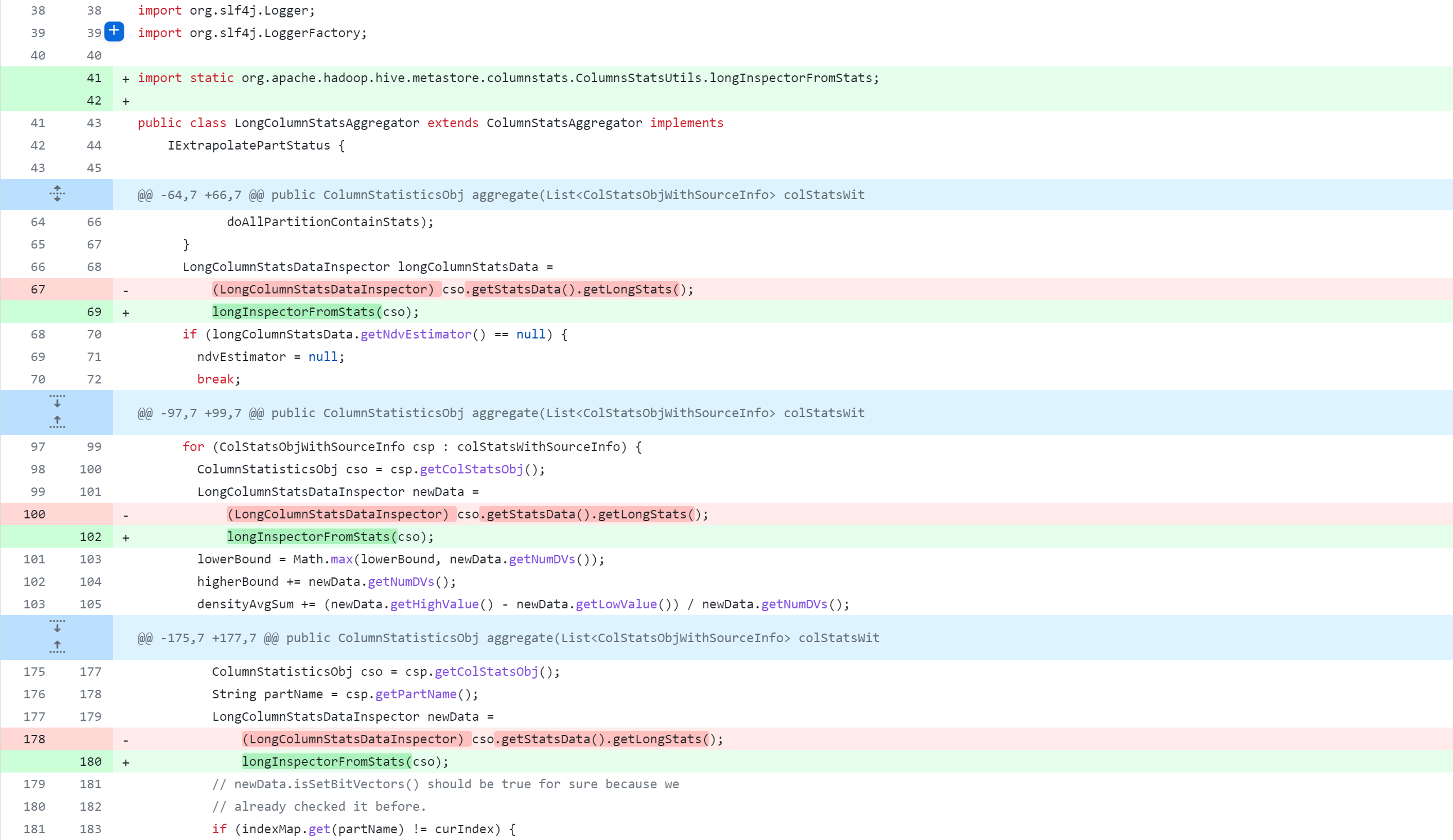

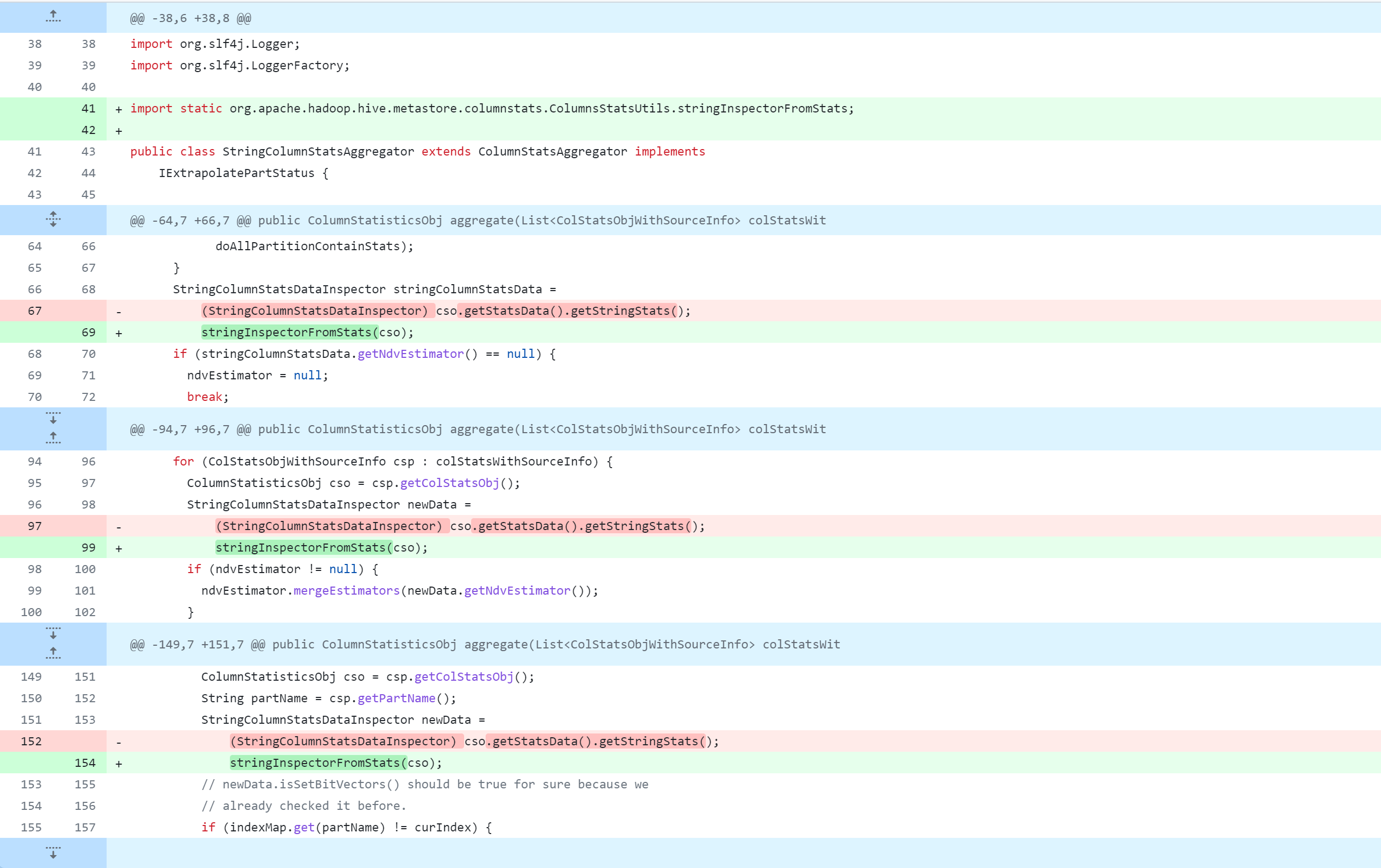

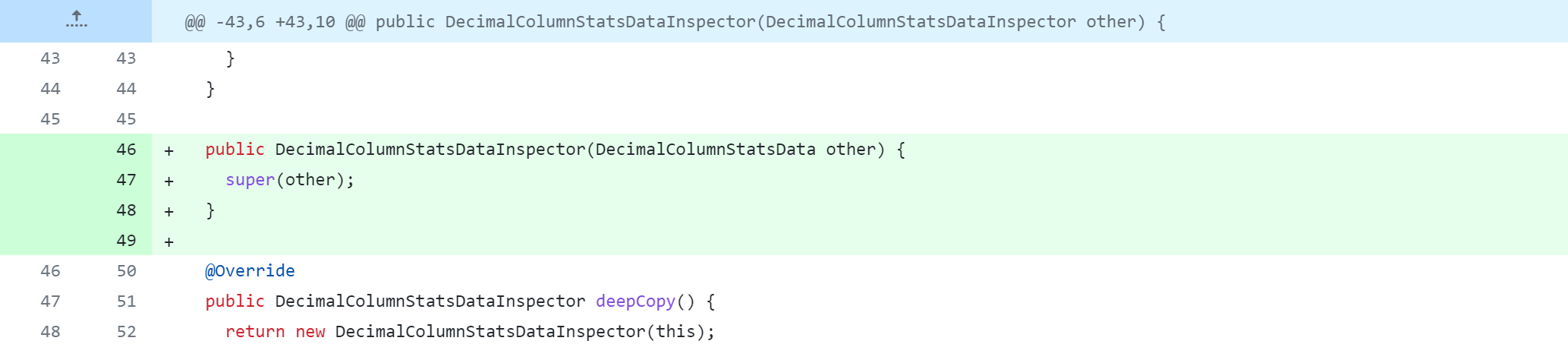

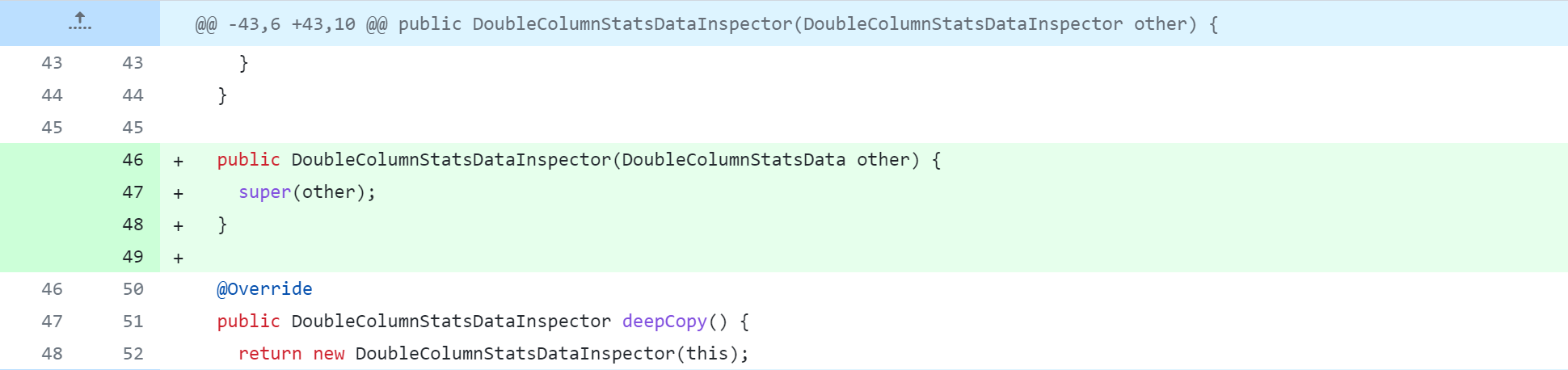

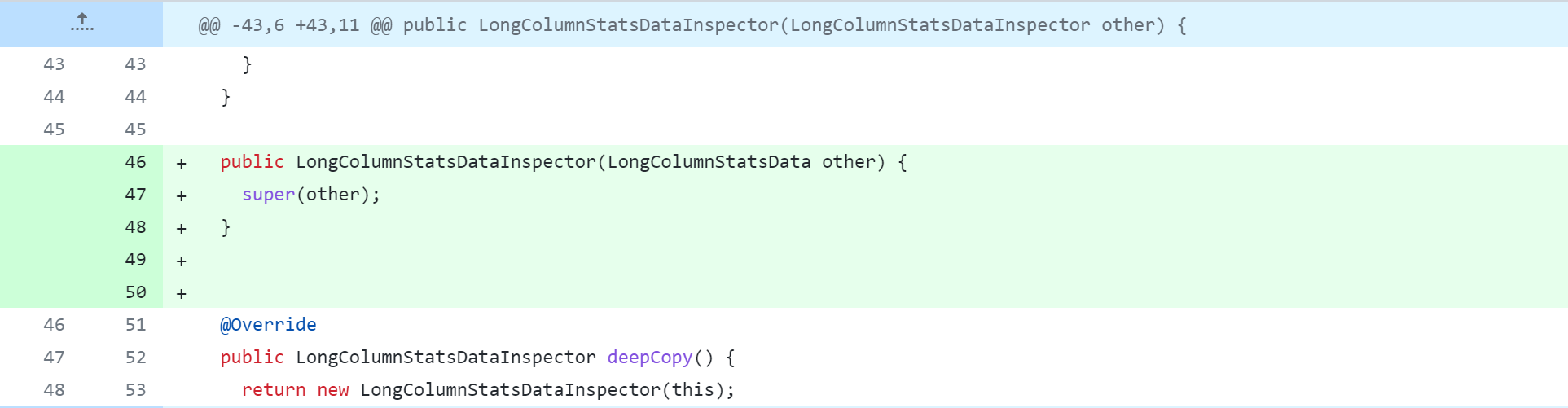

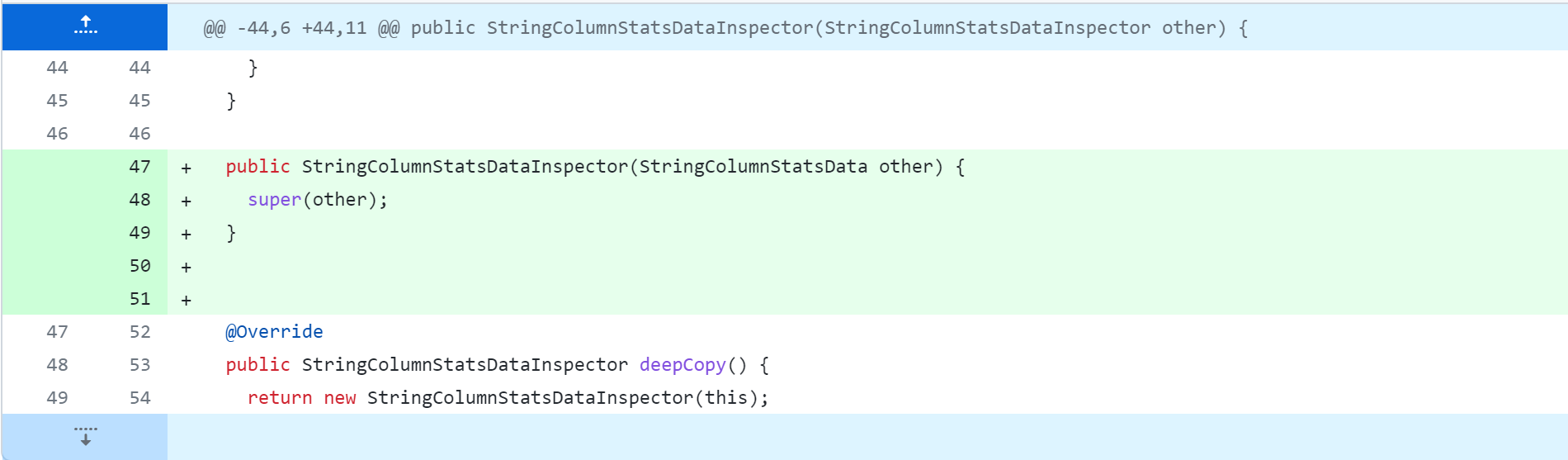

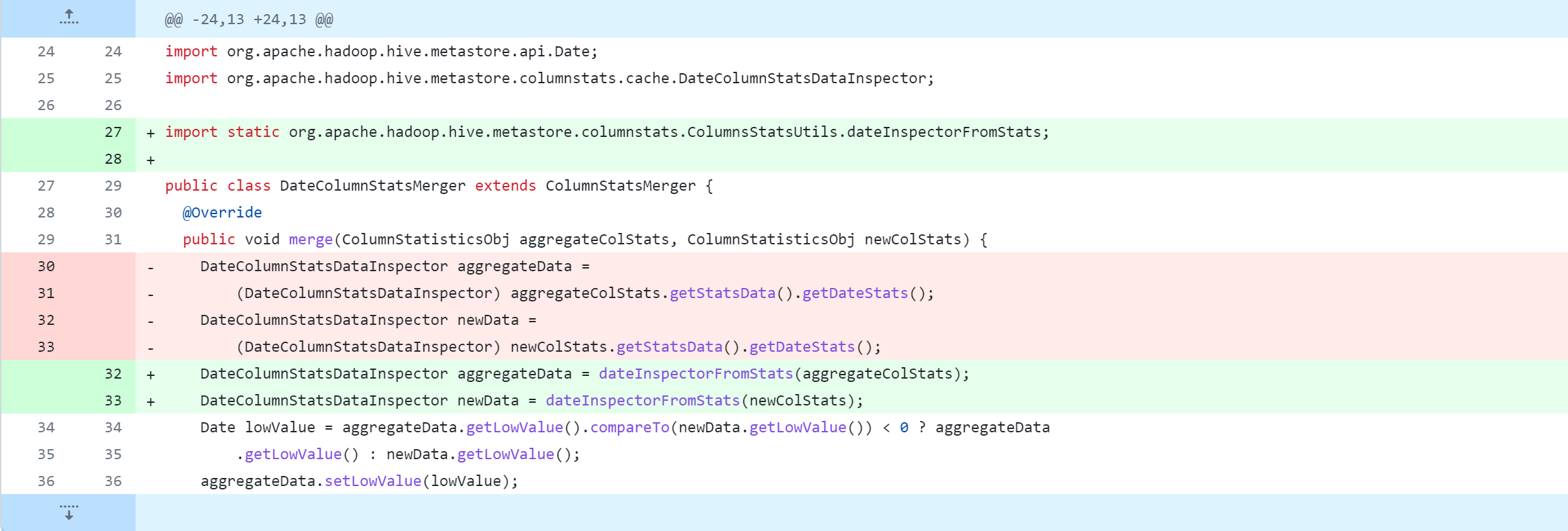

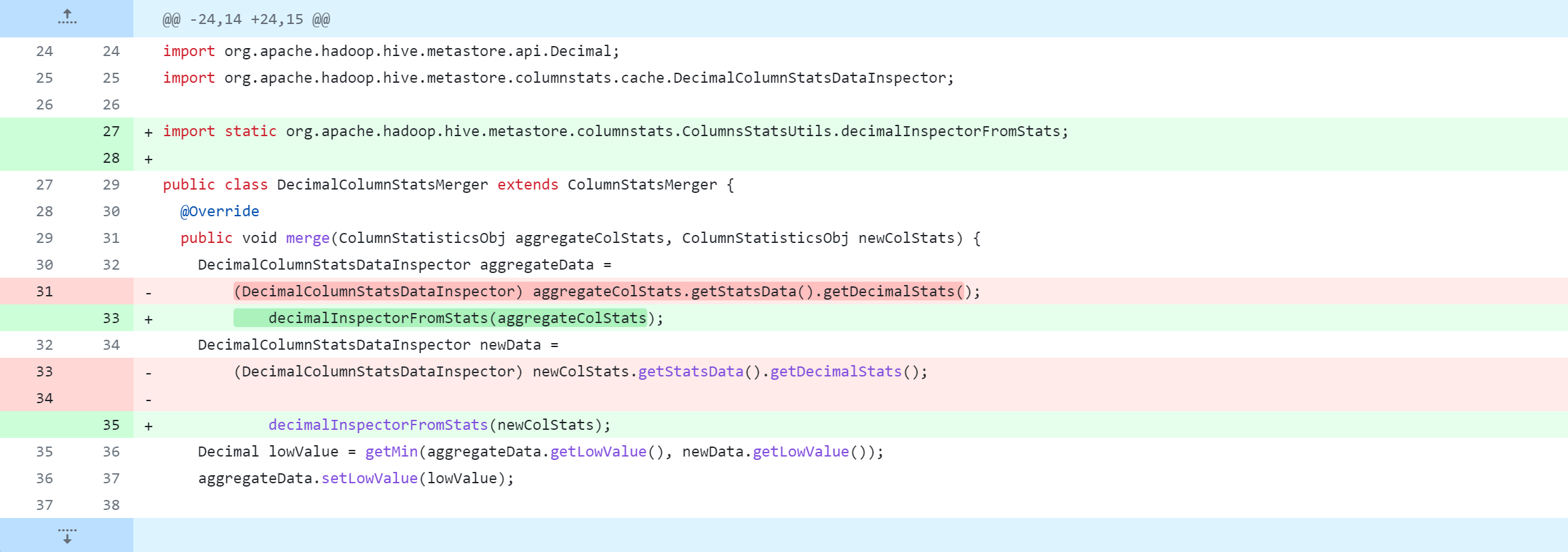

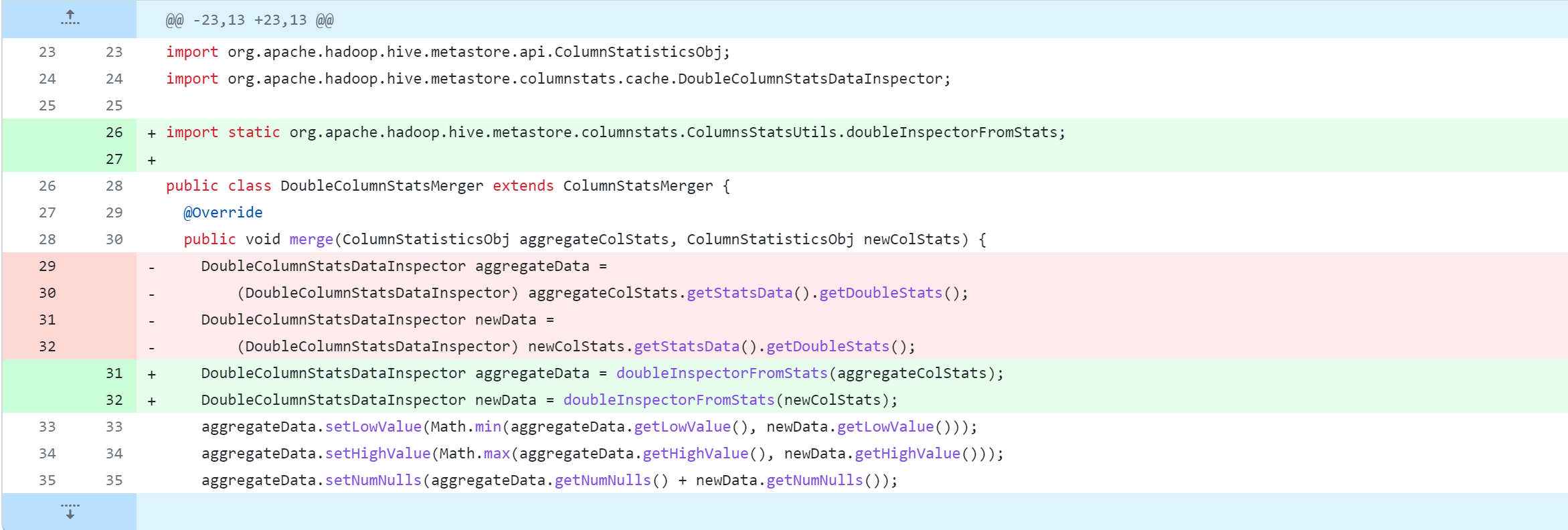

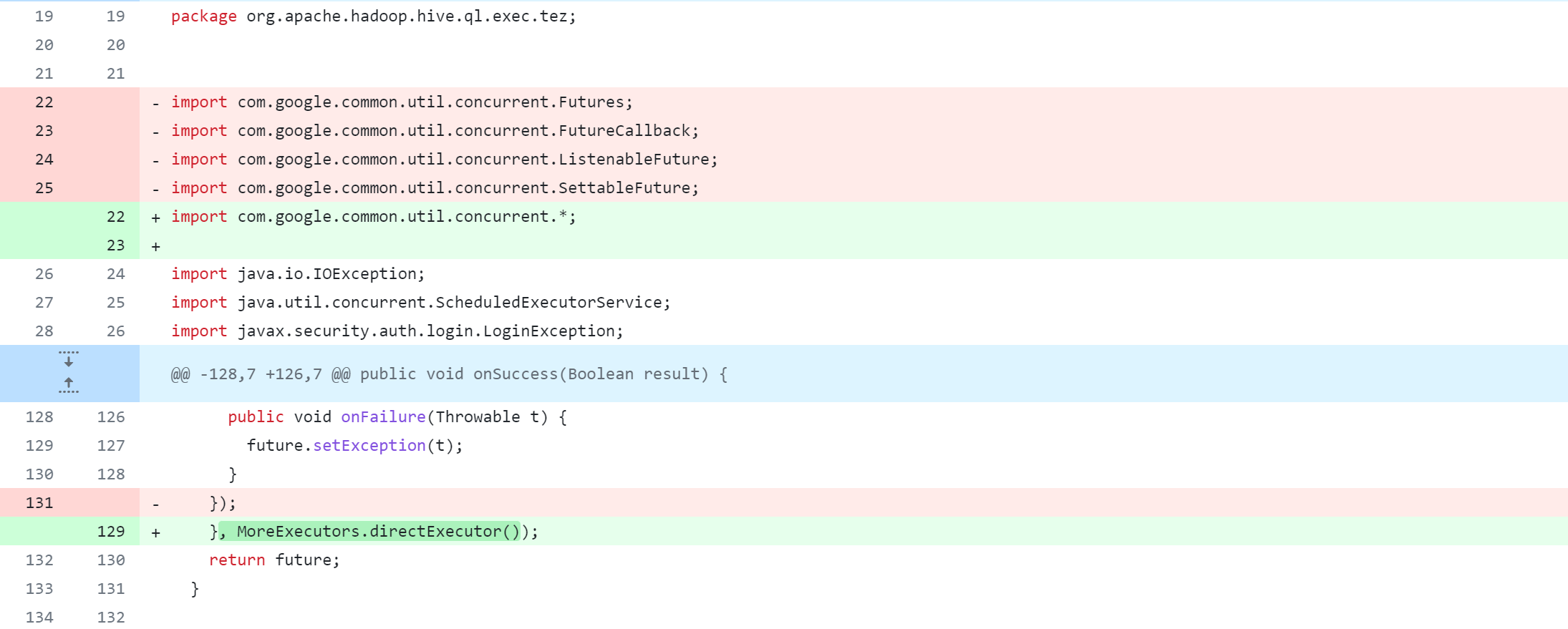

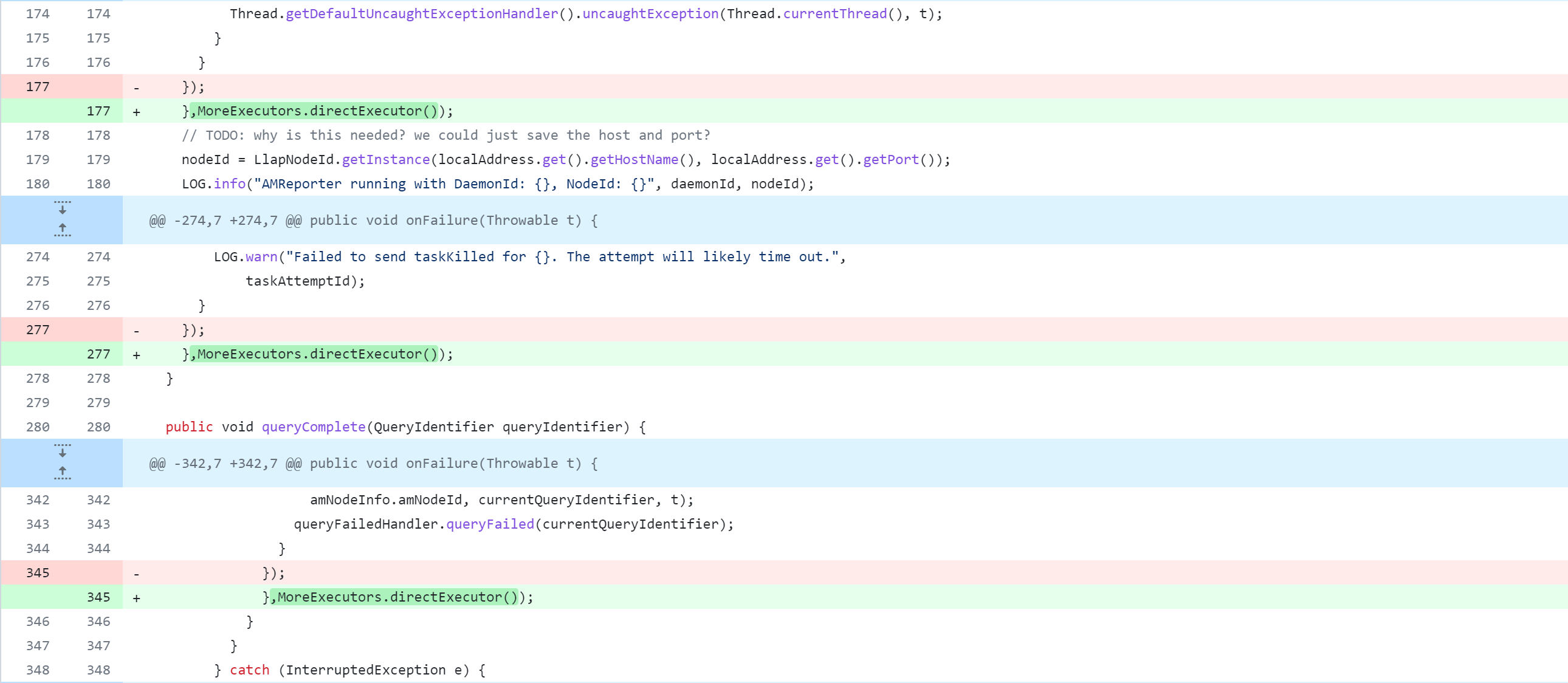

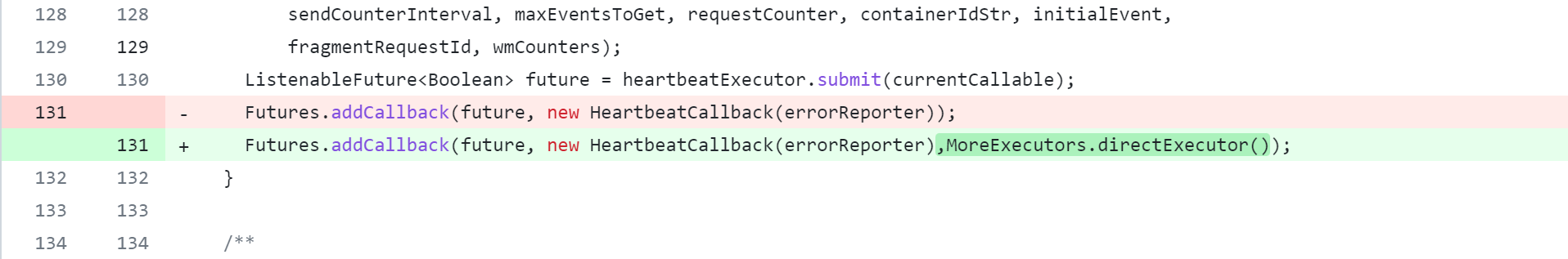

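Modifying the project pom.xml

1. Change the Hadoop version

Hive 3.1.3 is built against Hadoop 3.1.0 by default. As far as I remember, Hive supports a range of Hadoop versions, so decide based on your needs whether to change the Hadoop-related settings.

    Hadoop version: 3.1.0 -> 3.1.3

I clearly remember that Hadoop 3.1.3 uses version 1.7.25 of its logging library:

    Logging library version: 1.7.10 -> 1.7.25

2. Change the guava version

Hive loads Hadoop's dependencies at runtime, so the guava version in Hive should be changed to the one Hadoop uses. Even if you skip this step, you may end up swapping the guava JAR when you actually run Hive (if the versions differ only slightly, the swap may be unnecessary).

    guava version: 19.0 -> 27.0-jre

3. Change the Spark version

Hive 3.1.3 supports Spark 2.3.0 by default. This step is the core of the whole exercise: making Hive support Spark 3.4.0. That is a fairly new version, so lower it as your needs dictate. The Scala version must be changed together with the Spark version; the values used here are Scala 2.12.

    Default values:                     Spark 2.3.0, Scala binary version 2.11, Scala 2.11.8
    Original plan (Spark 3.4.0):        Spark 3.4.0, Scala binary version 2.12, Scala 2.12.17
    Final choice (Spark lowered):       Spark 3.2.4, Scala binary version 2.12, Scala 2.12.17

The original plan was to build against Spark 3.4.0, but there were too many pitfalls and it had to be abandoned. After a lot of painful trial and error, the Spark version was lowered to 3.2.4.

Modifying the Hive source

About the modifications

Modifying the Hive source involves deleting, changing, and adding code. The figure below is a Git diff view, which most readers will recognize. To be explicit: a line marked - is deleted, and a line marked + is added or changed.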

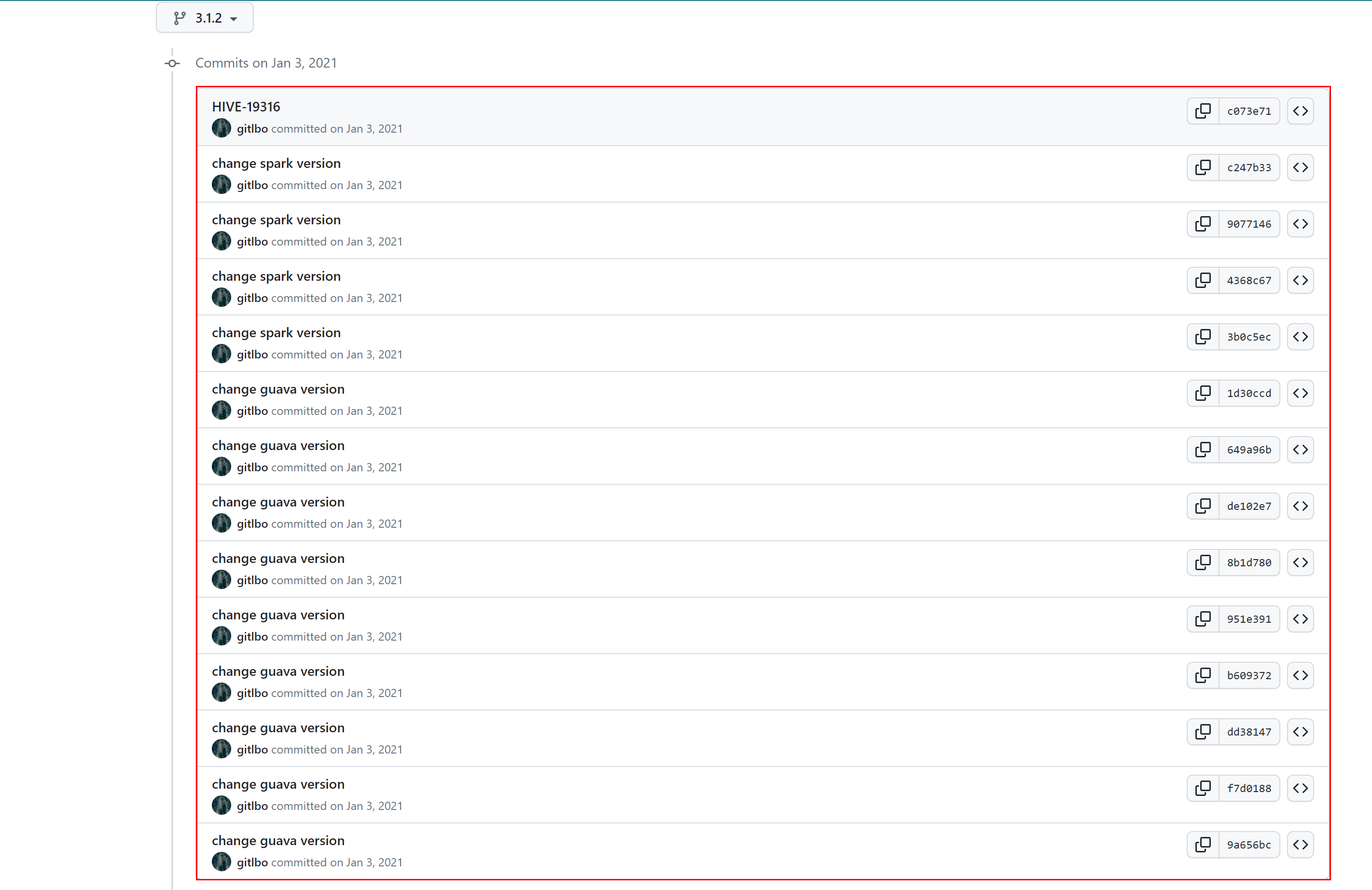
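The pom.xml version changes listed earlier can also be scripted. The sketch below is one possible way to do it with sed; `set_pom_property` is a made-up helper, and the property names in the usage comments (`hadoop.version`, `slf4j.version`, `guava.version`, `spark.version`, `scala.binary.version`, `scala.version`) are assumptions that should be checked against the actual pom.xml before use.

```shell
# Hypothetical helper: replace the value of one <name>...</name> property in a pom.xml.
# Assumes the property's opening and closing tags sit on a single line.
set_pom_property() {
  pom_file=$1
  prop=$2
  value=$3
  # Escape dots so that e.g. "hadoop.version" is matched literally by sed.
  prop_re=$(printf '%s' "$prop" | sed 's/\./\\./g')
  # Write to a temporary file and move it into place (portable alternative to sed -i).
  sed "s|<${prop_re}>[^<]*</${prop_re}>|<${prop}>${value}</${prop}>|" "$pom_file" \
    > "$pom_file.tmp" && mv "$pom_file.tmp" "$pom_file"
}

# Usage, with assumed property names:
#   set_pom_property pom.xml hadoop.version 3.1.3
#   set_pom_property pom.xml slf4j.version 1.7.25
#   set_pom_property pom.xml guava.version 27.0-jre
#   set_pom_property pom.xml spark.version 3.2.4
#   set_pom_property pom.xml scala.binary.version 2.12
#   set_pom_property pom.xml scala.version 2.12.17
```

Editing the file by hand in the IDE works just as well; a script mainly helps when the same changes have to be reapplied to a fresh copy of the source.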

Modifying the Hive source is the core step. For exactly which source files to change, see: https://github.com/gitlbo/hive/commits/3.1.2

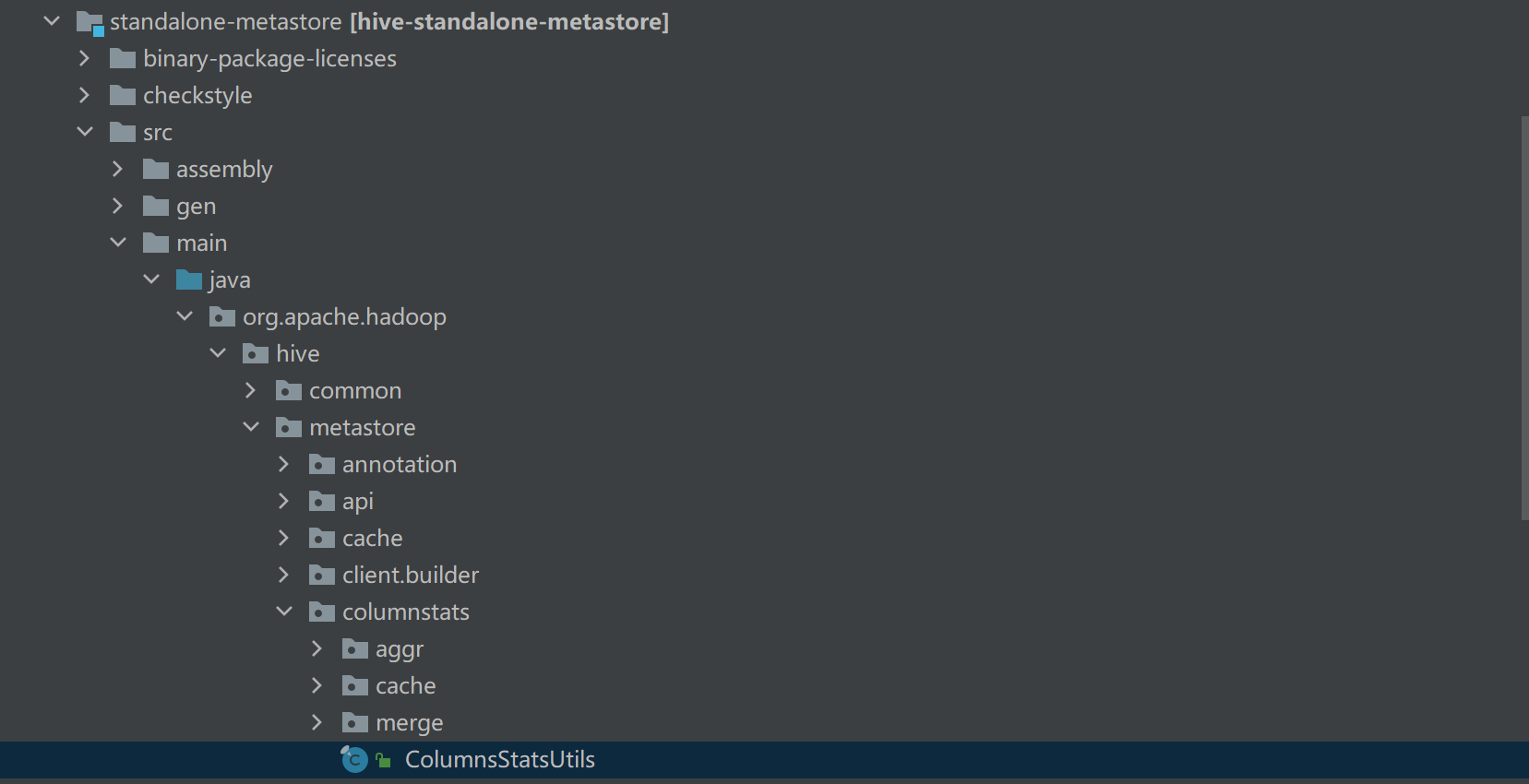

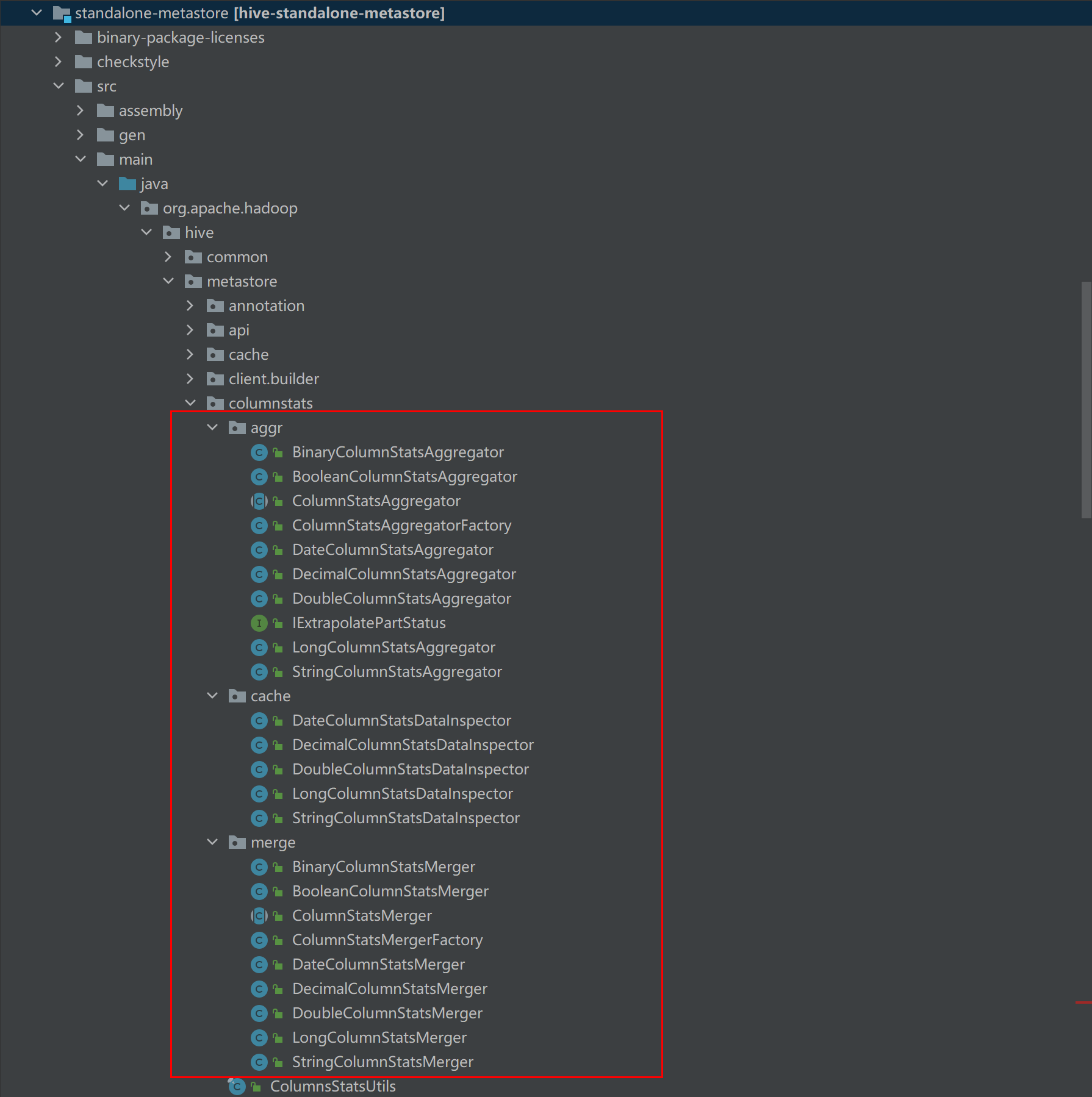

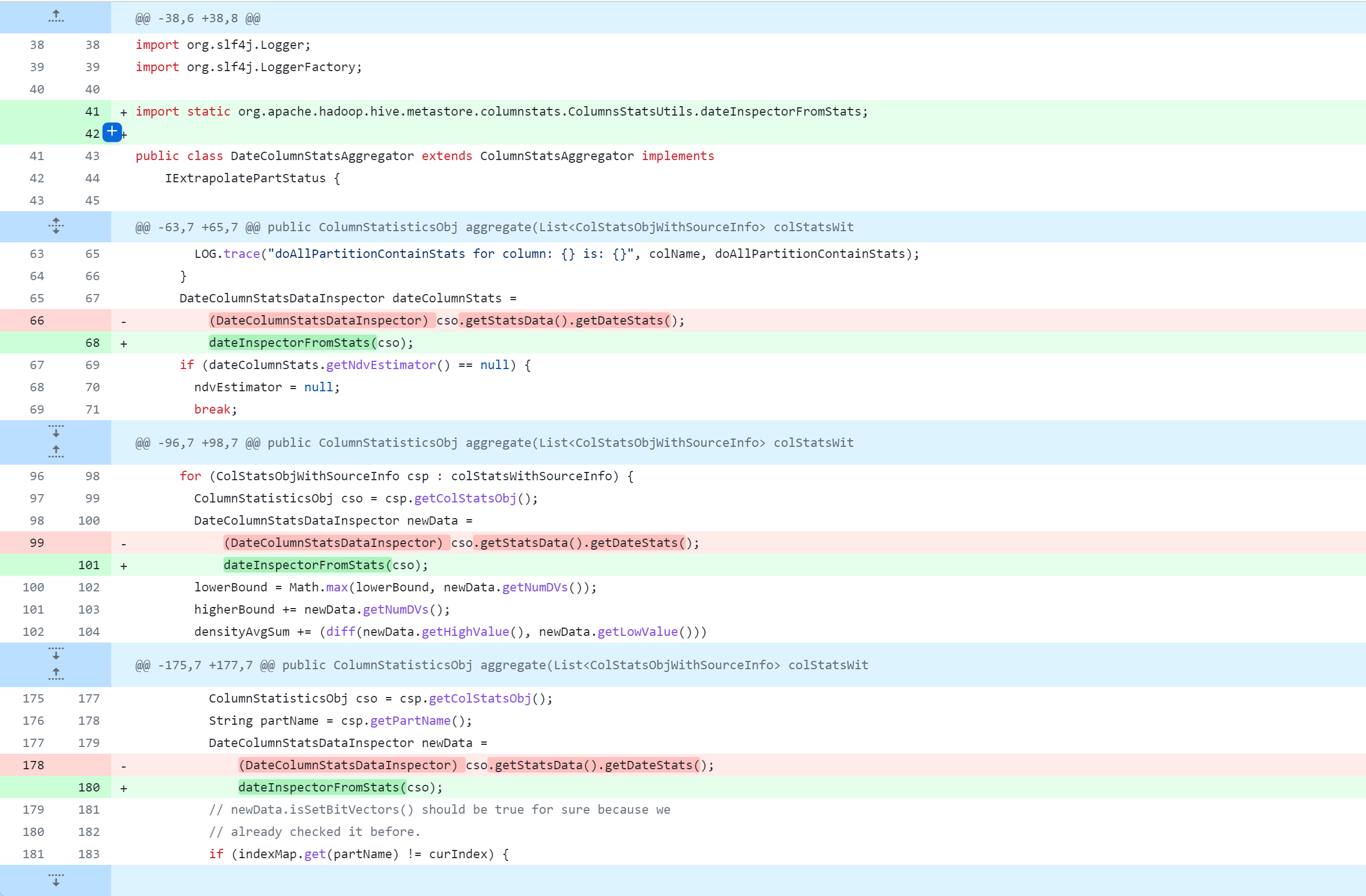

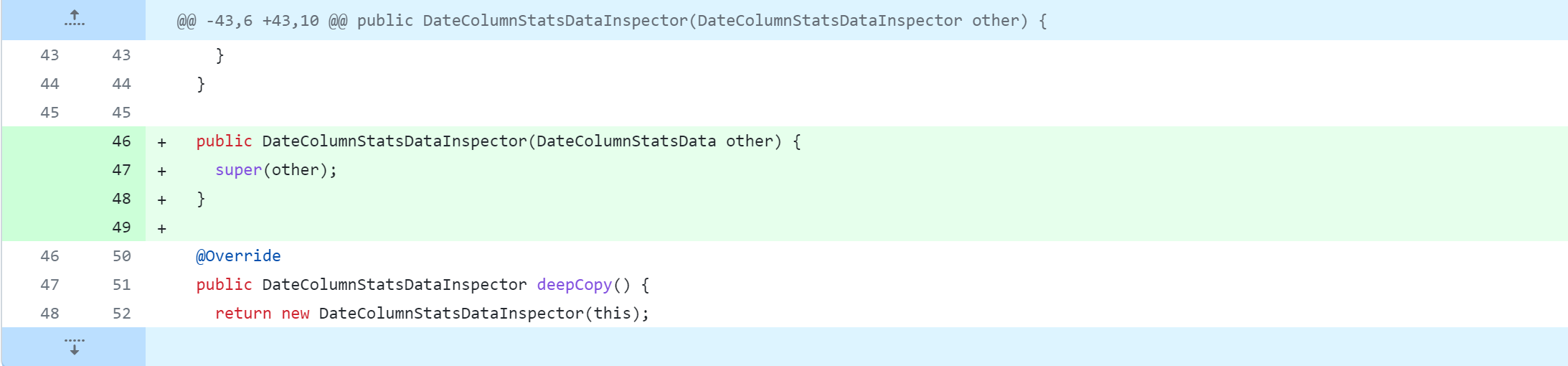

For the specific changes, see: https://github.com/gitlbo/hive/commit/c073e71ef43699b7aa68cad7c69a2e8f487089fd

Create the ColumnsStatsUtils class.

Then make the following changes, as shown in the screenshots below.

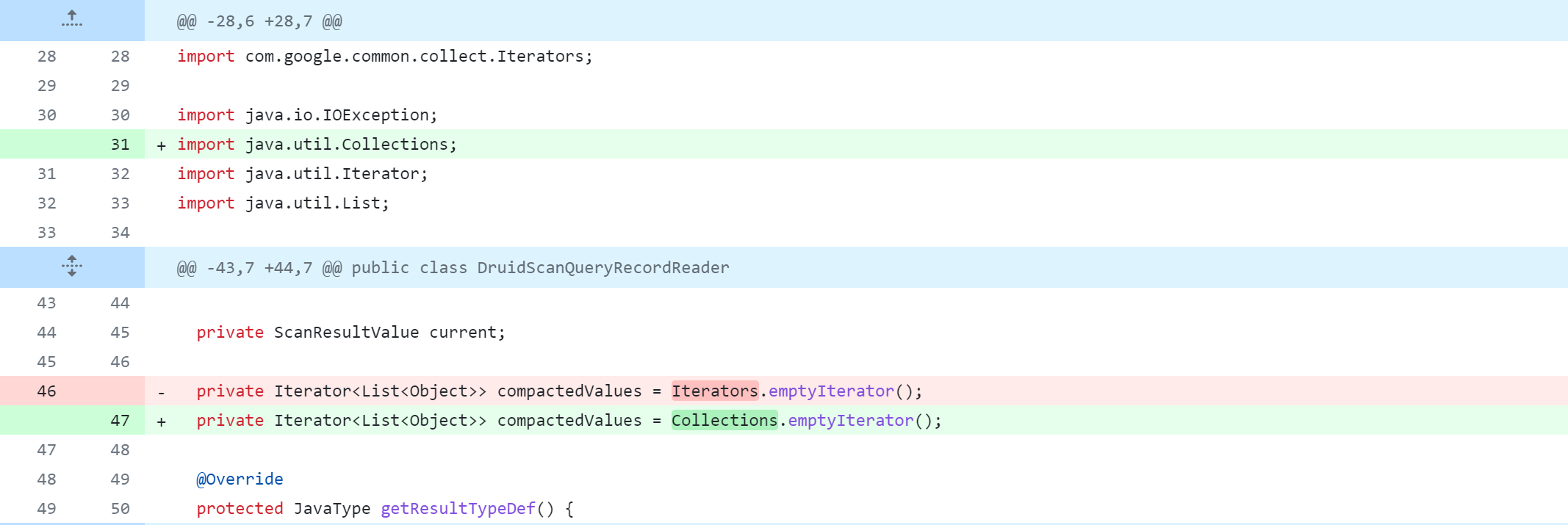

standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/aggr/DateColumnStatsAggregator.java
ql/src/test/org/apache/hadoop/hive/ql/stats/TestStatsUtils.java
spark-client/src/main/java/org/apache/hive/spark/client/metrics/ShuffleWriteMetrics.java
druid-handler/src/java/org/apache/hadoop/hive/druid/serde/DruidScanQueryRecordReader.java
llap-server/src/java/org/apache/hadoop/hive/llap/daemon/impl/AMReporter.java
llap-tez/src/java/org/apache/hadoop/hive/llap/tezplugins/LlapTaskSchedulerService.java
llap-common/src/java/org/apache/hadoop/hive/llap/AsyncPbRpcProxy.java

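Building and packaging

After modifying the Hive source, run the build-and-package command. Either of the following works; the first also skips Javadoc generation:

    mvn clean package -Pdist -DskipTests -Dmaven.javadoc.skip=true
    mvn clean package -Pdist -DskipTests

Various problems are bound to come up during the build, and each one has to be resolved. For the exceptions encountered along the way, compare against the exception list below.

Notes

1. Cached entries in the local repository can sometimes cause dependency resolution errors. Try purging this project's dependencies from the local repository. The following command removes the artifacts referenced by pom.xml and downloads them again:

    mvn dependency:purge-local-repository

2. After changing a version number in pom.xml, changing code, or installing a JAR into the local repository, close and reopen IDEA so that stale caches or delayed refreshes do not get in the way.

Exception list

Note: all of the exceptions below occurred while building Hive against Spark 3.4.0; the Spark version was lowered afterwards.

Exception 1

Maven reports that a JAR cannot be found or cannot be downloaded, or the download takes very long (even with a proxy enabled).

For example, the Maven repository does not have hive-upgrade-acid-3.1.3.jar or pentaho-aggdesigner-algorithm-5.1.5-jhyde_2.jar. The exact error, for reference:

    [ERROR] Failed to execute goal on project hive-upgrade-acid: Could not resolve dependencies for project org.apache.hive:hive-upgrade-acid:jar:3.1.3: Failure to find org.pentaho:pentaho-aggdesigner-algorithm:jar:5.1.5-jhyde in https://maven.aliyun.com/repository/central was cached in the local repository, resolution will not be reattempted until the update interval of aliyun-central has elapsed or updates are forced -> [Help 1]

Solution: search for the required JAR in one of the repositories below, download it manually, and install it into the local repository.

Repository 1: https://mvnrepository.com/
Repository 2: https://central.sonatype.com/
Repository 3: https://developer.aliyun.com/mvn/search

Syntax of the command that installs a JAR into the local repository:

    mvn install:install-file -Dfile= -DgroupId= -DartifactId= -Dversion= -Dpackaging=

-Dfile: path to the JAR file; an absolute path on the local file system works.
-DgroupId: the project's group ID, usually in reverse domain name form, for example com.example.
-DartifactId: the project's unique identifier, usually the project name.
-Dversion: the project's version number.
-Dpackaging: the packaging type of the file, for example jar.

    mvn install:install-file -Dfile=./hive-upgrade-acid-3.1.3.jar -DgroupId=org.apache.hive -DartifactId=hive-upgrade-acid -Dversion=3.1.3 -Dpackaging=jar
    mvn install:install-file -Dfile=./pentaho-aggdesigner-algorithm-5.1.5-jhyde.jar -DgroupId=org.pentaho -DartifactId=pentaho-aggdesigner-algorithm -Dversion=5.1.5-jhyde -Dpackaging=jar
    mvn install:install-file -Dfile=./hive-metastore-2.3.3.jar -DgroupId=org.apache.hive -DartifactId=hive-metastore -Dversion=2.3.3 -Dpackaging=jar
    mvn install:install-file -Dfile=./hive-exec-3.1.3.jar -DgroupId=org.apache.hive -DartifactId=hive-exec -Dversion=3.1.3 -Dpackaging=jar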
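When several JARs have to be installed by hand, typing the install command repeatedly is error-prone. The sketch below generates the command from Maven coordinates; `install_jar_cmd` is a made-up helper, and it assumes the downloaded file sits in the current directory and follows the usual artifactId-version.jar naming.

```shell
# Hypothetical helper: print the mvn install:install-file command for one JAR.
# Assumes the file is ./<artifactId>-<version>.jar in the current directory.
install_jar_cmd() {
  group_id=$1
  artifact_id=$2
  version=$3
  printf 'mvn install:install-file -Dfile=./%s-%s.jar -DgroupId=%s -DartifactId=%s -Dversion=%s -Dpackaging=jar\n' \
    "$artifact_id" "$version" "$group_id" "$artifact_id" "$version"
}

# Usage: print the command, review it, then run it.
#   install_jar_cmd org.pentaho pentaho-aggdesigner-algorithm 5.1.5-jhyde
#   install_jar_cmd org.apache.hive hive-exec 3.1.3 | sh
```

Printing the command instead of running it directly leaves room to check the coordinates first, since a JAR installed under the wrong groupId or version will not satisfy the failing dependency.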
Exception 2

The error mentions bash, which was disheartening at first. The build was being run on Windows, where bash is not available by default.

    [ERROR] Failed to execute goal org.apache.maven.plugins:maven-antrun-plugin:1.7:run (generate-version-annotation) on project hive-common: An Ant BuildException has occured: Execute failed: java.io.IOException: Cannot run program "bash" (in directory "C:\Users\JackChen\Desktop\apache-hive-3.1.3-src\common"): CreateProcess error=2, The system cannot find the file specified.
    [ERROR] around Ant part ...... @ 4:46 in C:\Users\JackChen\Desktop\apache-hive-3.1.3-src\common\target\antrun\build-main.xml

Solution: most developers have Git installed, and Git ships with a Bash window. Run the build command from Git Bash:

    mvn clean package -Pdist -DskipTests

Exception 3

The build fails at Hive Llap Server.

    [INFO] Hive Llap Client ................................... SUCCESS [ 4.030 s]
    [INFO] Hive Llap Tez ...................................... SUCCESS [ 4.333 s]
    [INFO] Hive Spark Remote Client ........................... SUCCESS [ 5.382 s]
    [INFO] Hive Query Language ................................ SUCCESS [01:28 min]
    [INFO] Hive Llap Server ................................... FAILURE [ 7.180 s]
    [INFO] Hive Service ....................................... SKIPPED
    [INFO] Hive Accumulo Handler .............................. SKIPPED
    [INFO] Hive JDBC .......................................... SKIPPED
    [INFO] Hive Beeline ....................................... SKIPPED
    [ERROR] Failed to execute goal org.apache.maven.plugins:maven-compiler-plugin:3.6.1:compile (default-compile) on project hive-llap-server: Compilation failure
    [ERROR] /C:/Users/JackChen/Desktop/apache-hive-3.1.3-src/llap-server/src/java/org/apache/hadoop/hive/llap/daemon/impl/QueryTracker.java:[30,32] org.apache.logging.slf4j.Log4jMarker is not public in org.apache.logging.slf4j; cannot be accessed from outside package
    [ERROR]
    [ERROR] -> [Help 1]
    [ERROR]
    [ERROR] To see the full stack trace of the errors, re-run Maven with the -e switch.
    [ERROR] Re-run Maven using the -X switch to enable full debug logging.
    [ERROR]
    [ERROR] For more information about the errors and possible solutions, please read the following articles:
    [ERROR] [Help 1] http://cwiki.apache.org/confluence/display/MAVEN/MojoFailureException
    [ERROR]
    [ERROR] After correcting the problems, you can resume the build with the command
    [ERROR] mvn -rf :hive-llap-server

Solution: in QueryTracker, comment out the line that constructs Log4jMarker and obtain the marker from MarkerFactory instead:

    // Requires: import org.slf4j.MarkerFactory;
    public class QueryTracker extends AbstractService {
      // private static final Marker QUERY_COMPLETE_MARKER = new Log4jMarker(new Log4jQueryCompleteMarker());
      private static final Marker QUERY_COMPLETE_MARKER = MarkerFactory.getMarker("MY_CUSTOM_MARKER");
    }

Exception 4

The build fails at the Hive HCatalog Webhcat module.

    [INFO] Hive HCatalog ...................................... SUCCESS [ 10.947 s]
    [INFO] Hive HCatalog Core ................................. SUCCESS [ 7.237 s]
    [INFO] Hive HCatalog Pig Adapter .......................... SUCCESS [ 2.652 s]
    [INFO] Hive HCatalog Server Extensions .................... SUCCESS [ 9.255 s]
    [INFO] Hive HCatalog Webhcat Java Client .................. SUCCESS [ 2.435 s]
    [INFO] Hive HCatalog Webhcat .............................. FAILURE [ 7.284 s]
    [INFO] Hive HCatalog Streaming ............................ SKIPPED
    [INFO] Hive HPL/SQL ....................................... SKIPPED
    [INFO] Hive Streaming ..................................... SKIPPED

The exact error:

    [ERROR] Failed to execute goal org.apache.maven.plugins:maven-compiler-plugin:3.6.1:compile (default-compile) on project hive-webhcat: Compilation failure
    [ERROR] /root/apache-hive-3.1.3-src/hcatalog/webhcat/svr/src/main/java/org/apache/hive/hcatalog/templeton/Main.java:[258,31] no suitable constructor found for FilterHolder(java.lang.Class)
    [ERROR] constructor org.eclipse.jetty.servlet.FilterHolder.FilterHolder(org.eclipse.jetty.servlet.BaseHolder.Source) is not applicable
    [ERROR] (argument mismatch; java.lang.Class cannot be converted to org.eclipse.jetty.servlet.BaseHolder.Source)
    [ERROR] constructor org.eclipse.jetty.servlet.FilterHolder.FilterHolder(java.lang.Class) is not applicable
    [ERROR] (argument mismatch; java.lang.Class cannot be converted to java.lang.Class)
    [ERROR] constructor org.eclipse.jetty.servlet.FilterHolder.FilterHolder(javax.servlet.Filter) is not applicable
    [ERROR] (argument mismatch; java.lang.Class cannot be converted to javax.servlet.Filter)
    [ERROR]
    [ERROR] -> [Help 1]
    [ERROR]
    [ERROR] To see the full stack trace of the errors, re-run Maven with the -e switch.
    [ERROR] Re-run Maven using the -X switch to enable full debug logging.
    [ERROR]
    [ERROR] For more information about the errors and possible solutions, please read the following articles:
    [ERROR] [Help 1] http://cwiki.apache.org/confluence/display/MAVEN/MojoFailureException
    [ERROR]
    [ERROR] After correcting the problems, you can resume the build with the command
    [ERROR] mvn -rf :hive-webhcat

The source shows that AuthFilter extends AuthenticationFilter, which in turn implements Filter, so this error should not occur. Adding an explicit cast in the source by hand did not help either:

    public FilterHolder makeAuthFilter() throws IOException {
      // FilterHolder authFilter = new FilterHolder(AuthFilter.class);
      FilterHolder authFilter = new FilterHolder((Class) AuthFilter.class);
      UserNameHandler.allowAnonymous(authFilter);

Solution: building and packaging this module on its own in IDEA succeeds:

    [INFO] ------------------------------------------------------------------------
    [INFO] BUILD SUCCESS
    [INFO] ------------------------------------------------------------------------
    [INFO] Total time: 40.755 s
    [INFO] Finished at: 2023-08-06T21:39:17+08:00
    [INFO] ------------------------------------------------------------------------

That led to the following idea:

1. The project is packaged with Maven (mvn package). When the same command is run again without clean, a module whose compiled output already exists and whose sources are unchanged is not recompiled.
2. So first run clean on the project, then package the Webhcat module on its own, and finally run the full build without the clean step: mvn package -Pdist -DskipTests.

Note: after the Spark version was lowered, this problem no longer occurred.

Build and packaging succeeded

After several hours of troubleshooting and a long build, it finally succeeded. This output is a welcome sight.

    [INFO] --- maven-dependency-plugin:2.8:copy (copy) @ hive-packaging ---
    [INFO] Configured Artifact: org.apache.hive:hive-jdbc:standalone:3.1.3:jar
    [INFO] Copying hive-jdbc-3.1.3-standalone.jar to C:\Users\JackChen\Desktop\apache-hive-3.1.3-src\packaging\target\apache-hive-3.1.3-jdbc.jar
    [INFO] ------------------------------------------------------------------------
    [INFO] Reactor Summary for Hive 3.1.3:
    [INFO]
    [INFO] Hive Upgrade Acid .................................. SUCCESS [ 5.264 s]
    [INFO] Hive ............................................... SUCCESS [ 0.609 s]
    [INFO] Hive Classifications ............................... SUCCESS [ 1.183 s]
    [INFO] Hive Shims Common .................................. SUCCESS [ 2.239 s]
    [INFO] Hive Shims 0.23 .................................... SUCCESS [ 2.748 s]
    [INFO] Hive Shims Scheduler ............................... SUCCESS [ 2.286 s]
    [INFO] Hive Shims ......................................... SUCCESS [ 1.659 s]
    [INFO] Hive Common ........................................ SUCCESS [ 9.671 s]
    [INFO] Hive Service RPC ................................... SUCCESS [ 6.608 s]
    [INFO] Hive Serde ......................................... SUCCESS [ 6.042 s]
    [INFO] Hive Standalone Metastore .......................... SUCCESS [ 42.432 s]
    [INFO] Hive Metastore ..................................... SUCCESS [ 2.304 s]
    [INFO] Hive Vector-Code-Gen Utilities ..................... SUCCESS [ 1.150 s]
    [INFO] Hive Llap Common ................................... SUCCESS [ 3.343 s]
    [INFO] Hive Llap Client ................................... SUCCESS [ 2.380 s]
    [INFO] Hive Llap Tez ...................................... SUCCESS [ 2.476 s]
    [INFO] Hive Spark Remote Client ........................... SUCCESS [31:34 min]
    [INFO] Hive Query Language ................................ SUCCESS [01:09 min]
    [INFO] Hive Llap Server ................................... SUCCESS [ 7.230 s]
    [INFO] Hive Service ....................................... SUCCESS [ 28.343 s]
    [INFO] Hive Accumulo Handler .............................. SUCCESS [ 6.179 s]
    [INFO] Hive JDBC .......................................... SUCCESS [ 19.058 s]
    [INFO] Hive Beeline ....................................... SUCCESS [ 4.078 s]
    [INFO] Hive CLI ........................................... SUCCESS [ 3.436 s]
    [INFO] Hive Contrib ....................................... SUCCESS [ 4.770 s]
    [INFO] Hive Druid Handler ................................. SUCCESS [ 17.245 s]
    [INFO] Hive HBase Handler ................................. SUCCESS [ 6.759 s]
    [INFO] Hive JDBC Handler .................................. SUCCESS [ 4.202 s]
    [INFO] Hive HCatalog ...................................... SUCCESS [ 1.757 s]
    [INFO] Hive HCatalog Core ................................. SUCCESS [ 5.455 s]
    [INFO] Hive HCatalog Pig Adapter .......................... SUCCESS [ 4.662 s]
    [INFO] Hive HCatalog Server Extensions .................... SUCCESS [ 4.629 s]
    [INFO] Hive HCatalog Webhcat Java Client .................. SUCCESS [ 4.652 s]
    [INFO] Hive HCatalog Webhcat .............................. SUCCESS [ 8.899 s]
    [INFO] Hive HCatalog Streaming ............................ SUCCESS [ 4.934 s]
    [INFO] Hive HPL/SQL ....................................... SUCCESS [ 7.684 s]
    [INFO] Hive Streaming ..................................... SUCCESS [ 4.049 s]
    [INFO] Hive Llap External Client .......................... SUCCESS [ 3.674 s]
    [INFO] Hive Shims Aggregator .............................. SUCCESS [ 0.557 s]
    [INFO] Hive Kryo Registrator .............................. SUCCESS [03:17 min]
    [INFO] Hive TestUtils ..................................... SUCCESS [ 1.154 s]
    [INFO] Hive Packaging ..................................... SUCCESS [01:58 min]
    [INFO] ------------------------------------------------------------------------
    [INFO] BUILD SUCCESS
    [INFO] ------------------------------------------------------------------------
    [INFO] Total time: 38:22 min (Wall Clock)
    [INFO] Finished at: 2023-08-08T22:50:15+08:00
    [INFO] ------------------------------------------------------------------------

Summary

Two points matter most throughout the build and packaging process:

1. JARs that cannot be downloaded, or that download slowly, must be dealt with one way or another. The JARs are the foundation of the build, and none can be missing.
2. Even with all dependencies resolved, compatibility and compilation problems may remain. Work through them one at a time, moving on only after each is fixed.
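The failure logs above end with a hint of the form `mvn -rf :hive-llap-server`, which names the module to resume from. When fixing problems one at a time, that hint can be pulled out of a saved build log automatically. The sketch below assumes the Maven output was saved to a file; `resume_module` and the file name build.log are made up for illustration.

```shell
# Hypothetical helper: print the artifactId from the last "-rf :artifactId"
# resume hint found in a saved Maven build log. Prints nothing if no hint exists.
resume_module() {
  log_file=$1
  sed -n 's/.*-rf :\([A-Za-z0-9._-]*\).*/\1/p' "$log_file" | tail -n 1
}

# Usage, assuming the previous run was saved with
#   mvn clean package -Pdist -DskipTests 2>&1 | tee build.log
# resume from the failed module (note: no clean, so earlier output is kept):
#   mvn package -Pdist -DskipTests -rf ":$(resume_module build.log)"
```

Depending on the Maven version, resuming with `package` may fail to resolve the modules that were built earlier; in that case running `install` instead of `package` is a likely workaround, though that was not tried in this walkthrough.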


代码如下:

代码如下:

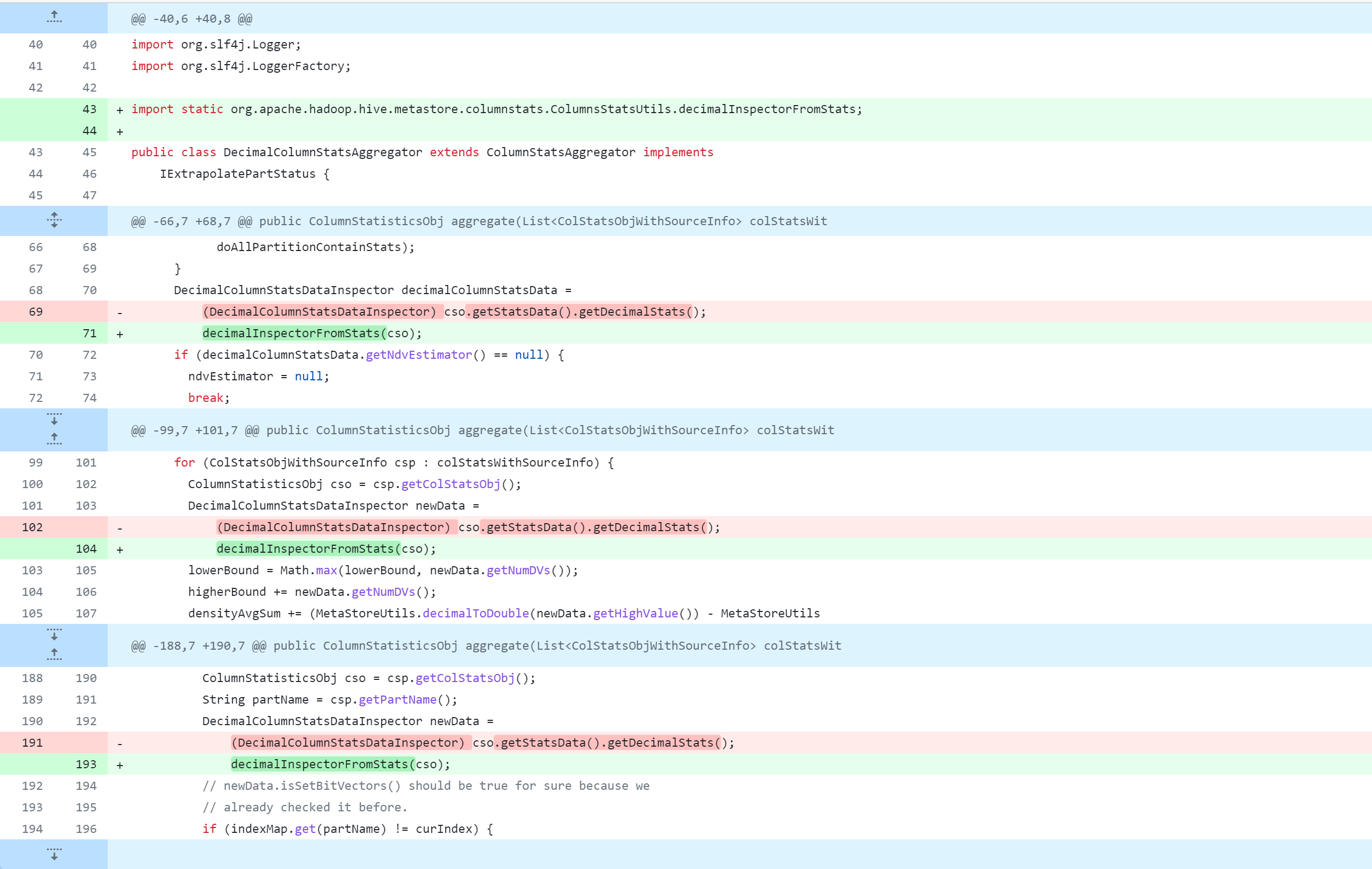

standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/aggr/DecimalColumnStatsAggregator.java

standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/aggr/DecimalColumnStatsAggregator.java  standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/aggr/DoubleColumnStatsAggregator.java

standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/aggr/DoubleColumnStatsAggregator.java  standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/aggr/LongColumnStatsAggregator.java

standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/aggr/LongColumnStatsAggregator.java  standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/aggr/StringColumnStatsAggregator.java

standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/aggr/StringColumnStatsAggregator.java  standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/cache/DateColumnStatsDataInspector.java

standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/cache/DateColumnStatsDataInspector.java  standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/cache/DecimalColumnStatsDataInspector.java

standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/cache/DecimalColumnStatsDataInspector.java  standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/cache/DoubleColumnStatsDataInspector.java

standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/cache/DoubleColumnStatsDataInspector.java  standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/cache/LongColumnStatsDataInspector.java

standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/cache/LongColumnStatsDataInspector.java  standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/cache/StringColumnStatsDataInspector.java

standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/cache/StringColumnStatsDataInspector.java  standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/merge/DateColumnStatsMerger.java

standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/merge/DateColumnStatsMerger.java  standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/merge/DecimalColumnStatsMerger.java

standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/merge/DecimalColumnStatsMerger.java  standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/merge/DoubleColumnStatsMerger.java

standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/merge/DoubleColumnStatsMerger.java  standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/merge/LongColumnStatsMerger.java

standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/merge/LongColumnStatsMerger.java  standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/merge/StringColumnStatsMerger.java

standalone-metastore/src/main/java/org/apache/hadoop/hive/metastore/columnstats/merge/StringColumnStatsMerger.java

ql/src/test/org/apache/hadoop/hive/ql/exec/tez/SampleTezSessionState.java

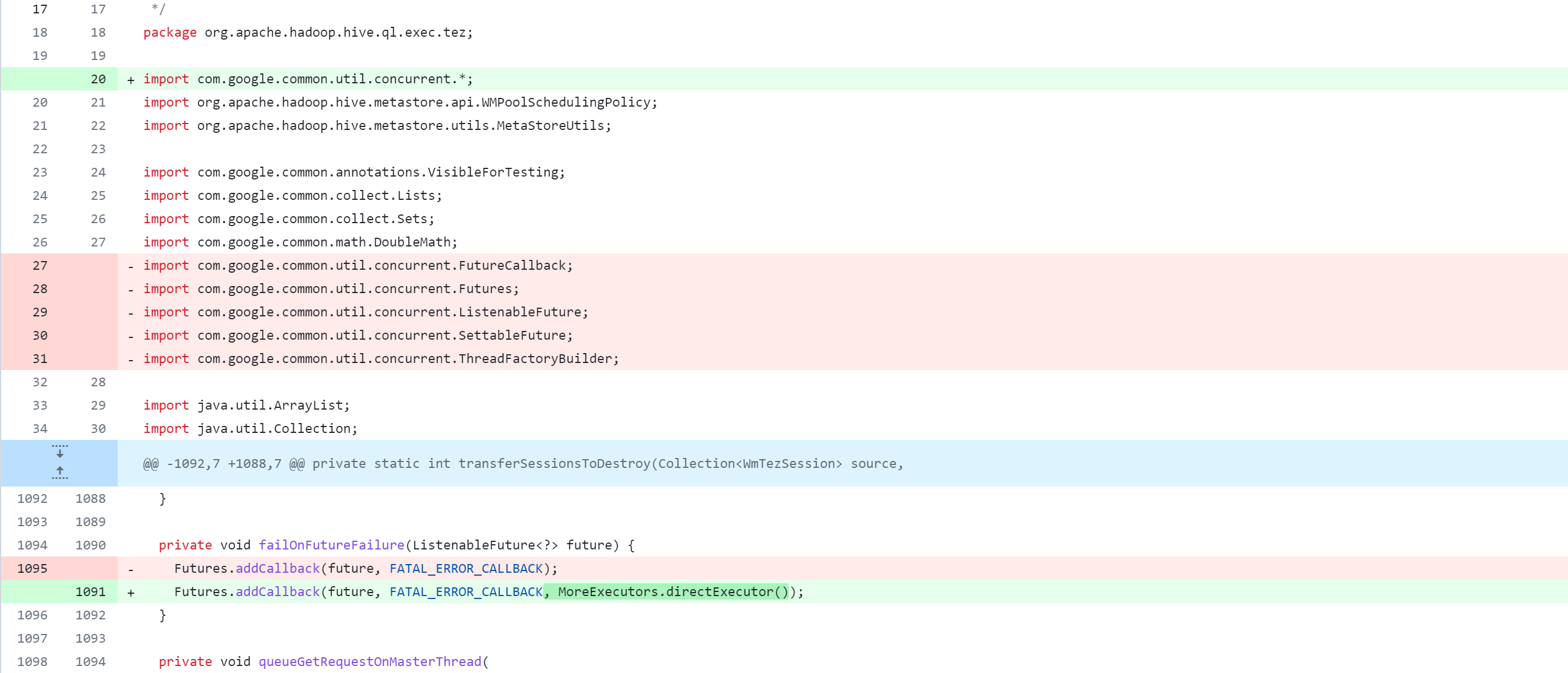

ql/src/test/org/apache/hadoop/hive/ql/exec/tez/SampleTezSessionState.java  ql/src/java/org/apache/hadoop/hive/ql/exec/tez/WorkloadManager.java

ql/src/java/org/apache/hadoop/hive/ql/exec/tez/WorkloadManager.java

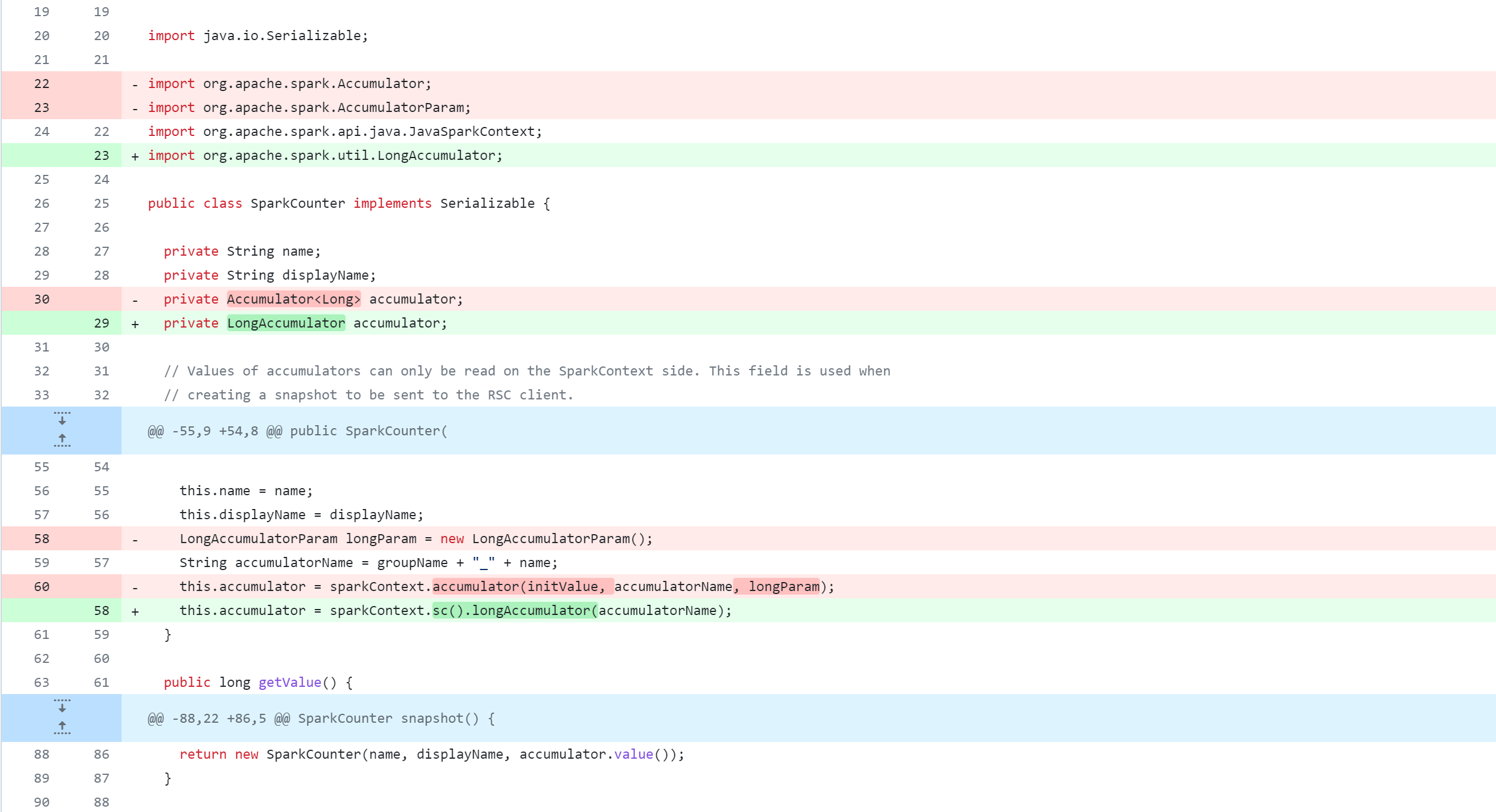

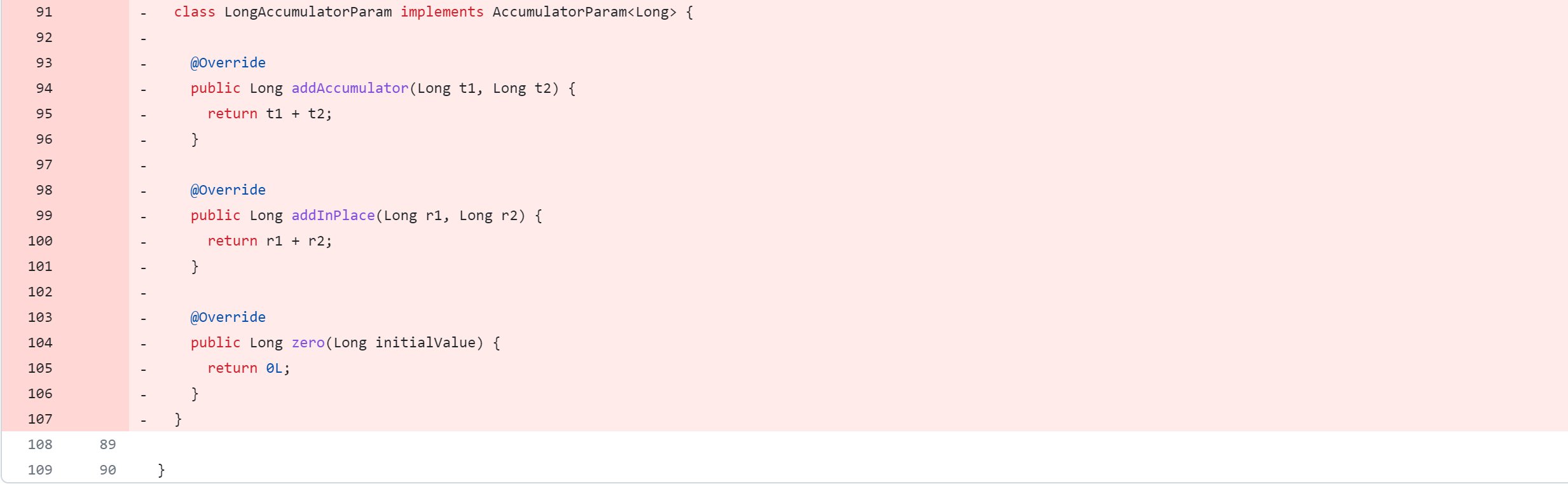

spark-client/src/main/java/org/apache/hive/spark/counter/SparkCounter.java

spark-client/src/main/java/org/apache/hive/spark/counter/SparkCounter.java

llap-server/src/java/org/apache/hadoop/hive/llap/daemon/impl/LlapTaskReporter.java

llap-server/src/java/org/apache/hadoop/hive/llap/daemon/impl/LlapTaskReporter.java  llap-server/src/java/org/apache/hadoop/hive/llap/daemon/impl/TaskExecutorService.java

llap-server/src/java/org/apache/hadoop/hive/llap/daemon/impl/TaskExecutorService.java